How Do You Actually Ship AI-Generated Code to Production? [Ft. Chris Kelly, Augment Code]

In AI coding, the most fascinating thing I've seen is senior engineers being really slow to actually adopt AI because they're sort of hesitant over the quality, and there's probably some fear about losing my job. But for the most part, I've never seen a technical revolution that has been slowly adopted by software engineers. How do you think about engineering and development teams in the future? I give a talk on this topic, how to ship AI quality code to production. The same engineering tools that you have, you've got to give to your LLM. And the dirty secret is code review has always been the most important skill. If everything was built using autonomous agents, does that solve anything for the non-technical people running dev teams? It changes the perspective of, like, what can be done in a certain cycle time. Is there anything keeping you up at night? Anything that makes you nervous about where this trend may be going? There's some...

Alright. Chris Kelly here, head of product at Augment Code. Thanks for being here.

Thanks for having me. Excited to chat.

This is about autonomous coding. You speak a lot about this. Give me the high level.

So autonomous coding is really having agents, AI, write code for us and use all the tools and systems that we would normally use manually as software engineers. Now we've got AI that can drive that and really accelerate how much code we can produce, what we can even build. And that's the future we're starting to see sparks of, what this will look like going forward.

"Starting to see" is interesting, because when you think about the hype, for most people who are maybe not super in on AI, the hype I think is, man, there are just autonomous agents doing all these different jobs out there. When it comes to the coding side, where are we with that? Are we really at a point where it is going and autonomously coding things?

I think we're going there.
There's still a lot of work to go. For one, there's a lot of skill that has to be learned on how to work with the agent. It's not like any software engineer can just pick up an agent and say, make me this thing, in one or two sentences, and it'll make that thing. You have to learn how to work with it, like we have with any other technical tool. And so, in AI coding, the most fascinating thing I've seen is senior engineers, people that I consider my peers, have been really slow to actually adopt AI because they're sort of hesitant over the quality. There's probably some fear about, like, losing my job. But for the most part, I've never seen a technical revolution that has been slowly adopted by software engineers. Cloud came out and everyone was like, let's do everything cloud. Rust came out and everyone was like, let's rewrite everything in Rust. And AI has been different for that reason. So there is a lot of hype, and I think that has been just a fascinating experience, watching people that I thought would be the first to jump on board be almost the last ones. We're going there, but I think the hesitation they're feeling is that the quality of code still really matters. When you're trying to ship code to production, when I'm the one going to get paged at two in the morning, I want to make sure that code works and does what I expect it to do. And so there's a lot of hesitation. So the hype is valid. I just don't think we're there yet, where I can just be confident and say, make me this thing, and it'll just do it, carte blanche.

So there might be some reticence there about losing jobs and all that, but you think it's more on the side of, I just don't believe this is going to be as accurate as it would be if I did it myself.

Yeah, a hundred percent.
As a product engineer, my whole career I've been trained that A plus B always equals C. That's how I want to write software. That's everything I've ever been trained to do, because that way I'm certain it's going to do what I expect it to do for my customers. And AI is very different, right? AI is a non-deterministic system. So when it generates code, sometimes it generates code wrong. It's going to do that. And that is very off-putting for someone that's been deeply trained in the idea that systems are always supposed to work the way they're supposed to work. That's where I think the hesitation comes from. And in the early days, a couple of years ago, AI code generation was fine. It was okay. But it wasn't great. If you look back at even GPT-3.5, the code that was being generated, or memos, or writing emails, it was fine. It was kind of wonky. And we've seen the acceleration of the quality over the last two years. So I think a lot of people had some early experiences that probably got in their way, and now they're hesitant to get their foot back in, when I think now is the most amazing time to be an engineer using AI.

So you've got the curve spiking up in terms of quality. Is adoption spiking up at the same rate, do you think? And are there any pockets or types of businesses or types of people that you see using it more than...

Yeah. So I think adoption definitely took a turn. When Anthropic came out with Claude 4, well, 3.7 really, there was a spike in the quality of code that was being generated. And then Claude Code came out earlier this year. That's where I saw my peers starting to pick up agentic coding and really feeling what it could do. So we've definitely turned that corner.
When it comes to what kinds of engineers, I don't think there's one specific pocket. It's not like front-end engineers are having more success or less success. It's really about how willing you are to learn this new tool. And we see it from individual hackers all the way through big organizations, because there are individual hackers that have been putting Linux boxes underneath desks for forty years anyway. It's that hacker mentality of who wants to work on this thing, and that exists everywhere in software engineering. That's always been the driver for new tools and new adoption: the individual developer that's like, I just want this thing to work. They figure out how to make it work for them, and then it just spreads from there.

Was there a moment when you were working with it, whether it's an aha moment or whatever, of like, oh, ****. This works. This is going to be the thing we're going towards.

Yeah. So code completion, the tab-complete kind of thing, I always thought was good, and I enjoyed working with it. It sped me up a little bit. Once we got to agentic coding, that's when I was like, oh, this is pretty neat. I probably still hadn't used it much yet, but I was starting to push internally to create a CLI, a terminal-based agent similar to Claude Code. I was really pushing that forward and prototyping with one of our co-founders. My highlight was once I got that to be able to write code for itself. It got good enough to start improving itself, and then I would refresh and get the new code loaded in. And that was a really amazing experience, like, this is cool, to be able to write software that writes itself.
And I'm still in charge of how I'm laying out the code, all of the code that's being written. I'm still reviewing all of it. But that was just a real spark for me, and it's still driving me today to be able to do that.

What about for anybody that hasn't used it, or maybe is listening and isn't into code? What are the most exciting use cases you can think of? Is it like, there's an issue in GitHub and I press a button and then it's going to go solve it? What is the best example of a workflow that would just blow somebody's mind?

Yeah. So I think that's a good start. Automation in general, broadly defined. Right now we've been doing a lot of coding in IDEs and clients. But code lives in so many other places than just your IDE, right? It runs in your CI systems, it runs in production, you get an alert from it, and there's so much potential for what AI can do in the rest of that software development life cycle, not just the individual coding portion. That's really exciting. And I think the GitHub issue or Linear issue to a PR is where people can start to see the real potential of automation, and that's very light automation. But imagine a world where I trigger that Linear ticket and it solves it. It opens a PR, it runs my test suite. It finds a failing test, and it's like, oh, I can fix these tests. So it does that. Those tests pass. It moves into a blue-green deploy. So some of my users are starting to see it, and it's monitoring the monitoring system to make sure everything's green. And then it starts to roll it out further. You can see a world where the entire software cycle is done by AI and it's really just inputs: put in the ticket of what I want it to do and then it'll go and do those things.
And so, yeah, Linear or GitHub issue to a PR is just the tip of the iceberg of what that entire ecosystem is going to look like in a few years.

For so long, I was amazing at writing Google Sheets formulas. I am horrendous at it now, because I just go and tell it what I need to do. I even screenshot the fields and the columns and rows, and it's like, write me a script. And what I found is that it is a decent amount of work to go back and forth. It's writing this long formula. I paste it in. It doesn't work. I have to screenshot the error and say it didn't work. It goes, oh, okay. Tries it again. Oh, that's right, you're in Google Sheets, not Excel. You're talking about something where this is fully contained. It's writing the code, seeing the errors, correcting itself, and you don't have that human in the loop doing that part.

Yeah. You asked earlier about what people need to know about using agents well. We think that code generation should just happen magically, right? Like, I asked it to do this thing, it spat out a bunch of code, and it was all perfect. I've never personally one-shotted a piece of code in my life, and I've been writing code for twenty years. But I have all these other tools at my disposal. I have linters. I have test suites. I have IntelliSense in my editor. They all help me make sure that the code I'm writing is functionally correct. And we sort of decided for a little while that, oh, we shouldn't give our agents these tools. But you give these agents the same tools I have as a software developer, and that's when they can actually do all the same work that a software engineer can do when it comes to writing the code. They can now write very functionally correct code because they have the same tools that I do.
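
As a rough illustration of the point about giving agents the same linters and test suites a human relies on, here is a minimal Python sketch of that feedback loop. It is not Augment's implementation. `propose_change` is a hypothetical stand-in for whatever call asks a model to edit files, and the check commands are whatever your project already runs.

```python
import subprocess
from typing import Callable, Sequence

Command = Sequence[str]


def run_checks(commands: Sequence[Command]) -> list[str]:
    """Run every check command; return one report per command that failed."""
    failures = []
    for cmd in commands:
        result = subprocess.run(cmd, capture_output=True, text=True)
        if result.returncode != 0:
            report = f"$ {' '.join(cmd)}\n{result.stdout}{result.stderr}"
            failures.append(report)
    return failures


def agent_loop(
    task: str,
    propose_change: Callable[[str, list[str]], None],  # hypothetical model call
    commands: Sequence[Command],
    max_rounds: int = 5,
) -> bool:
    """Alternate between the agent editing files and the checks judging them."""
    feedback: list[str] = []
    for _ in range(max_rounds):
        propose_change(task, feedback)   # agent edits the working tree
        feedback = run_checks(commands)  # the same tools a human would run
        if not feedback:
            return True                  # every check passed
    return False                         # out of rounds: hand back to a human
```

A call might look like `agent_loop("fix the failing test", my_agent, [["ruff", "check", "."], ["pytest", "-q"]])`, where `my_agent` and the choice of linter and test runner are assumptions for the example. The round limit is there so a change that never passes ends up in front of a reviewer instead of looping forever.
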
And so that's been, I think, the game changer, and my number one tip for engineers that are like, I don't get the quality that I want out of this. It's probably because you're not giving it all the things that you have access to. Why would you expect a magic box to be able to generate code without all of that same context and information?

So that's the interesting part, right? You said tools, but you also have the context. You have everything that you know about the business and the problem and all this historical knowledge in your brain. How do you provide that context to the agent? Is it through MCPs to different platforms? Is it reliant on the data quality and not having old, outdated SOPs or anything in place?

Yeah. So traditionally, if I pick up a ticket, I'm going to spend a couple of hours maybe reading a bunch of code, reading some docs, maybe talking to some people, to get that system into my head so then I can spend twenty minutes making an actual change, right? A deeply inefficient use of time. With agents, you want to get them that same context. They need to understand the system, the code base. So some agents will grep around your code base, searching your files to try to figure out what they need to do. For Augment, we actually do this very differently. We have our own retrieval model that indexes your code. So we have some real deep semantic understanding of your code base that nobody else is currently doing. And that's the context that I would normally have as an engineer, because I know the code base patterns I'm trying to replicate. I know how different systems interact with each other. That semantic understanding is so much different than just grepping around a code base, right? Imagine if you're just searching for a string. You can only get so much knowledge that way.
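
To make the contrast between searching for a string and ranked retrieval concrete, here is a toy Python sketch. It does not show Augment's retrieval model. The word-count vectors below are only a stand-in for learned embeddings, which capture far more than shared words, and the file names and contents in `FILES` are made up for the example.

```python
import math
import re
from collections import Counter


def grep(query: str, files: dict[str, str]) -> list[str]:
    """Literal search: only files containing the exact query string match."""
    return [path for path, text in files.items() if query in text]


def tokens(text: str) -> Counter:
    """Split prose and identifiers (snake_case, camelCase) into word counts."""
    spaced = re.sub(r"([a-z0-9])([A-Z])", r"\1 \2", text)
    return Counter(word.lower() for word in re.findall(r"[A-Za-z]+", spaced))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank(query: str, files: dict[str, str], top_k: int = 3) -> list[str]:
    """Return the files most similar to the query, best match first."""
    q = tokens(query)
    scored = [(cosine(q, tokens(text)), path) for path, text in files.items()]
    return [path for score, path in sorted(scored, reverse=True) if score > 0][:top_k]


# Hypothetical miniature code base.
FILES = {
    "billing/charges.py": "def retryPayment(order):\n    # try the failed charge again\n    ...",
    "billing/invoice.py": "def render_invoice(order):\n    # format the invoice as PDF\n    ...",
    "auth/login.py": "def check_password(user, password):\n    ...",
}
```

With these files, `grep("retry payment", FILES)` finds nothing because that exact string never appears, while `rank("retry payment", FILES)` surfaces `billing/charges.py` from the words inside `retryPayment`. A real retrieval model goes further and can relate code that shares no words with the query at all.
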
So for Augment, we basically do all of that, let me load all of this into your brain for you, on every single request. Then we can show the generative model, that LLM, all of the right context for that specific request every single time. And we find that we get much better results on the code quality. That's what our customers say. It's a hard thing to demonstrate in one minute, because you have to be familiar with your code base. But when senior engineers start to use Augment, they're like, oh, this thing knows things only I knew about. We had a customer who was the head of a technical area, and the way he vetted the product was he would take all the questions he'd get in Slack from his more junior engineers and just feed them directly to Augment. And if Augment could answer the question the same way he would answer it, he's like, this is the thing. That's how he figured out, Augment knows more about my code base than even I possibly could. So yeah, context makes a hundred percent of the difference in what a good LLM can generate versus a bad one.

Yeah. Have you seen it go awry anywhere with this autonomous coding?

Oh, sure. It still hallucinates. LLMs are non-deterministic. They can hallucinate weird things. If you're familiar with the strawberry problem, when you ask an LLM how many Rs are in strawberry, it doesn't actually know how to break down the word into individual characters, because of the way LLMs break text into tokens. So there are those kinds of problems. And weirdly, we have a Slack bot, and we were reworking it to be more agentic. So we had two versions of our Slack bot running in our Slack instance at the same time, our old one and our new one. And they started talking to each other.
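
Two bots answering each other is a classic loop, and one common guard can be sketched in a few lines of Python. This is an illustration only, not how Augment's Slack bot works. The `Message` shape and the `ReplyGuard` class are hypothetical, and the sketch assumes the chat platform tells you whether a message was written by a bot.

```python
from dataclasses import dataclass


@dataclass
class Message:
    thread_id: str
    author_id: str
    is_bot: bool  # assumed to be provided by the chat platform
    text: str


class ReplyGuard:
    """Decides whether a bot may answer, to avoid bot-to-bot reply loops."""

    def __init__(self, own_id: str, max_replies_per_thread: int = 3):
        self.own_id = own_id
        self.max_replies = max_replies_per_thread
        self.replies: dict[str, int] = {}  # thread_id -> replies sent so far

    def allow_reply(self, msg: Message) -> bool:
        """Return True and record the reply if answering this message is safe."""
        # Never answer bots, including ourselves: this alone breaks the loop.
        if msg.is_bot or msg.author_id == self.own_id:
            return False
        # Backstop: cap how often we speak in any single thread.
        sent = self.replies.get(msg.thread_id, 0)
        if sent >= self.max_replies:
            return False
        self.replies[msg.thread_id] = sent + 1
        return True
```

The first check is what stops two bots from feeding each other. The per-thread cap is a backstop for cases where a bot's message is not flagged as such.
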
They started replying to the same thread and went into basically an infinite loop of replying and asking questions to each other. And you're like, stop. Everybody just stop. We literally had to kill the processes on the back end.

And you saw it live in Slack?

Oh yeah. You could just watch this thing talking to itself, and people are like, how do I make it stop?

There's no, like, stop talking functionality? You talk to any LLM and it's always going to get the last word.

Yeah. And LLMs are a little aggressive. They want to be overly helpful. So our Slack bot was a little assertive, like, I want to make sure I'm always answering last. But when there are two of them, then you're spiraling out of control in Slack, talking about our code base with each other.

Okay. So let's say this picks up, becomes wildly adopted. How do you think about engineering and development teams in the future? Are they hiring people that are more code review than they are people that are, like... I mean, if the code component of it isn't there, what is it? Architecture and planning and code review?

I think that's the dirty little secret. I give a talk on this topic, on how to ship AI quality code to production. First, you need the right tools: the same engineering tools that you have, you've got to give to your LLM. And then code review is the most important skill. And the dirty secret is code review has always been the most important skill. As an engineer, I'm almost always reading code more than I'm actually writing code. It's like a 10x ratio of reading versus writing. So being really good at code review is really, really important. We didn't forget that, exactly, but we always kind of brushed it off to the side as engineering orgs. That's always been the case. But I think it's even more prevalent now, right?
If you're having a Linear issue trigger an agent to make a PR, and I'm doing that five times a day, that's a lot of code I've got to review. In my own personal workflow, I write almost no individual lines of code anymore. I have the agent do work. I'm looking at the source control view in VS Code, so I can audit what little bits of code it did generate. I stage those changes. I basically save them, ready to go. And then I have the agent do another round, and I'm constantly in this code review cycle. And I think for engineering managers, the thing they should be hiring for, and always should have been hiring for, is good code review. I've actually never seen a good code review interview question. And I think that's probably the most important thing. And if anyone wants a startup idea, I actually think our code review tools today are not great. I mean, as a human, the interfaces for how I look at code and think about what the changes are and why those changes were made, they're not great. So I want somebody to help me rethink just that interface for doing really great code review as a human, because we're going to do so much more of it. The IDE is just the home we live in right now, but I think we're even seeing some signals in the market that engineers aren't even going to be living in an IDE. I only live in one because I want the source control view. If I could get that in some other form, I'd have the terminal agent, Auggie CLI, and then a code review tool. And I'm happy that I can do everything I need to do just like this.

Was Augment Code built... like, was your code built using the same type of agent base?

Yeah. When we first started, agents weren't a thing, so we started with tab completions.
That's where the market was at the time, so we certainly did that. And then once agents came out, everyone on our team uses agents. We have clients for VS Code, IntelliJ, CLI. So whatever vehicle they want to use it in, we have an agent for that spot. And so we write most of our code using agents today.

Okay. So I have a question that's a bit selfish, and maybe it's applicable to anybody watching or listening. I am not super technical. I have had instances where I've had devs, engineering teams under me. We're building a product, or it's something that I'm overseeing, and it's always a pain point to just not really know how long something should or shouldn't take. So you've got an engineer telling you something's going to take five weeks, and you've got to push back and you're like, really? And it's very hard to challenge, because as soon as they get into the technicalities of it, you just don't know. If everything was built using autonomous agents, does that solve anything for the non-technical people running dev teams?

It changes the perspective of what can be done in a certain cycle time, right? I think you could probably chop off an order of magnitude. So a five-week project is now, what is that, three and a half days, right? That's just the sheer volume of code that we can generate. But coding has been the long part, not the hard part. The hard part is the thinking through the system. So there's only so much shortcutting we can do there. When it comes to estimating how much time a thing could take, LLMs aren't great estimators, because they're text generation machines. They're going to use all of the references that they've ever been trained on to say, well, this project should take eight and a half weeks.
Every time I make a plan, and I use plan-driven development, it always has some caption on the end like, here's the development time: weeks one and two, do this; weeks four and five, do that. And it's like, okay, this is an eight-week project. I'm like, I'm going to be done with this probably by the end of the day and have some PRs open for it. So LLMs themselves are not great estimators. But as a non-technical person, you can start to scope down the sheer volume of time it takes to work on code. That doesn't absolve us from still having to understand the system, the system design, the data flow, the user requirements. There's just a ton of work that still goes into building software other than just generating code. Code has always just been an artifact of the work. Someone that's doing a punch card is writing code in a different form than someone that is writing in a high-level programming language today. But the work was really about thinking through the system and the problem and the data, not generating a bunch of code. So that five-week time is now much less about the actual coding exercise and much more about how long it is going to take you to get the definitions of what you want to build, who you're building for, those things.

Makes sense. Is there anything keeping you up at night? Anything that makes you nervous about where this trend may be going?

I think there's some skill atrophy that happens. Like you mentioned, you wrote a bunch of Google Sheets formulas. I don't write a lot of code anymore. But I have a long background of writing a bunch of code.
So for junior engineers that don't have a ton of experience writing code, and with the problems that come up when writing code, learning how to read code and understand code is going to be a challenge. I don't have an answer for this yet. I don't know how you do that if you don't just get hands on keyboard for a long time and understand the trade-offs that code has. Because that's really what you're doing as a software engineer: making a bunch of trade-offs. I can write code this way or that way, both are functionally correct, but they come with certain decisions and baggage. And if you don't have the experience, I don't know how you gain that experience if the agent's doing a lot of that work. So as someone that's learning how to code, making sure that you're asking the agent to explain the code and think through it, seeing different scenarios, is probably the best way. That's probably my biggest fear. And I get on planes a lot for conferences and events and whatnot, and airplane Wi-Fi is not great. So if I'm doing agentic coding, I'm like, wait, you want me to actually open a file and write code, because the Wi-Fi is just too terrible? So my own comfort level in handwriting everything has definitely taken a hit this year.

So it's not like when the calculator came out and we all said, we don't need to learn how to do long division anymore, we've got this thing. There's going to be a problem if we do not have people that understand how to write, because how are they going to be able to do code review?

Yeah. The reality is we're still writing code. LLMs are still generating the same code that we were generating five years ago, just doing it at a much faster pace. And so production systems still run the same way.
All of those things, none of that has changed yet. I think it will. But right now we've built mostly a faster horse, and we haven't yet invented the combustion engine to move us out of that. So the skill of knowing what code does, the trade-offs, the architecture decisions, still matters. A microservice architecture is so much different than a monolith, and with how those systems interplay with each other, you still have to understand the code. The talk I give a lot is that vibes won't cut it, because you can't just let it go. You can for certain things, maybe an internal tool, a little hobby project, whatnot. But if you want to ship code to production that's going to have users, then you need to understand it, because AI can't yet answer that page and debug a complex system.

Yeah. It's 2030. This all gets a wildly crazy adoption rate. What does 2030 look like?

So, I kind of alluded to it. I think where we might get to is a world where you can trigger an agent based upon some kind of input, right? Like, I want to build a feature, or a user's requested something. You can have the entire software development life cycle encapsulated through agents all the way through: from planning the feature through coding the feature, testing that feature, and running and operating it in production. When it fails, it gets an alert, an agent audits that alert and decides what fix needs to happen, and that's now the new input for the next cycle. And then you've got agents running software all the time, and building all that software. So there's just going to be so much more software. And if you imagine our world today, there's honestly not that much software in it, relatively. I'm a sci-fi reader.
If you read sci-fi, there's software in everything. So we need a world where we can put software in everything, and we can't all maintain that. There's just a lot of software still to be built, but I do see a world where agents can do every one of those phases of software development. And if you have that, then everybody gets custom software. Instead of buying some off-the-shelf software that sort of solves your problem, you can have a system that's built just for you, the way your business wants to operate, with your requirements. And that's, I think, a really exciting time, to see what new kinds of software we're even going to build.

That's how we get to the combustion engine from the horses we're at now. Love it. Alright. Chris Kelly, head of product at Augment Code. Thanks for coming by.

Yeah. Thanks a lot, Frank. Appreciate it.

The engineers most resistant to AI coding tools are not the junior ones. Chris Kelly, Head of Product at Augment Code, has watched senior engineers, people with 20 years of experience shipping production systems, be the last to adopt. The reason is not fear of job loss. They were trained to build deterministic systems where A plus B always equals C, and a non-deterministic model that occasionally writes wrong code breaks a contract they have never had to question.

The quality gap is not a model problem, it is a context problem. Most teams point agents at a codebase without giving them the same linters, test suites, and tooling a human engineer relies on, then wonder why the output does not hold up in production. Chris breaks down the exact daily workflow he runs, where he writes almost no individual lines of code himself, how semantic retrieval changes what an agent actually understands about your codebase versus basic file search, and why the bottleneck for non-technical leaders compressing dev timelines was never the coding to begin with.

Topics Discussed:

- Why senior engineers are last to adopt AI coding tools

- Giving agents linters and test suites to close the production quality gap

- Semantic codebase retrieval vs. grepping as a context strategy

- Chris's continuous code review workflow replacing individual code writing

- Why coding was never the long part and what actually compresses with AI

- Skill atrophy risk for engineers skipping hands-on coding experience

- Code review as the highest-leverage engineering skill to hire for now

Augment Code is a developer productivity platform built to give AI agents deep semantic understanding of enterprise codebases. Where most AI coding tools rely on surface-level file search, Augment uses its own retrieval model to index a codebase and build genuine understanding of how systems, patterns, and components interact, so the generative model receives precisely relevant context on every request rather than raw file dumps. The result is AI-generated code that reflects how the existing codebase actually works, across VS Code, IntelliJ, and CLI environments, built on the principle that agents need the same tools a human engineer relies on, including linters, test suites, and full codebase context, to produce code that holds up in production.

So, autonomous coding is really having agents, AI, write code for us and use all the tools and systems that we would normally use manually as software engineers. Now we've got AI that can drive that and really accelerate how much code we can produce, what we can even build. And that's the future we're starting to see sparks of, what this will look like going forward.

In AI coding, the most fascinating thing I've seen is senior engineers, people that I consider my peers, have been really slow to actually adopt AI because they're sort of hesitant over the quality. There's probably some fear about, like, losing my job. But for the most part, I've never seen a technical revolution that has been slowly adopted by software engineers. Cloud came out, and everyone was like, let's do everything cloud. Rust came out, and everyone was like, let's rewrite everything in Rust. And AI has been different for that reason. So there is a lot of hype, and I think that has been just a fascinating experience, watching people that I thought would be the first to jump on board be almost the last ones.

How do you think about engineering and development teams in the future? Are they hiring people that are more code review than they are people that are, like I mean, if the code component of it isn't there, what is it? Architecture and planning and code review? I think that's the dirty little secret. You know? I give a talk on this topic, you know, on how to ship AI quality code to production. And first, it's the same engineering tools that you have, you got to give to your LLM. And then code review is the most important skill. And the dirty secret is code review's always been the most important skill.

If everything was built using autonomous agents, does that solve anything for the nontechnical people running dev teams? It changes the perspective of, like, what can be done in a certain cycle time. I think you could probably chop off an order of magnitude. So like a five week project is now three and a half days. Right? Like that's just the sheer volume of code that we can generate. Coding has been the like the long part, but it's not the hard part. The hard part is the thinking through the system.

Is there anything keeping you up at night? Anything that makes you nervous about where this trend may be going? I think there's some skill atrophy that happens. I don't write a lot of code anymore, but I have a long background of writing a bunch of code. So for junior engineers that don't have a ton of experience writing code, and with the problems that come up when writing code, learning how to read code and understand code is going to be a challenge. I don't have an answer for this yet. I don't know how you do that if you don't just get hands on keyboard for a long time and understand the trade-offs that code has. Because that's really what you're doing as a software engineer: making a bunch of trade-offs.

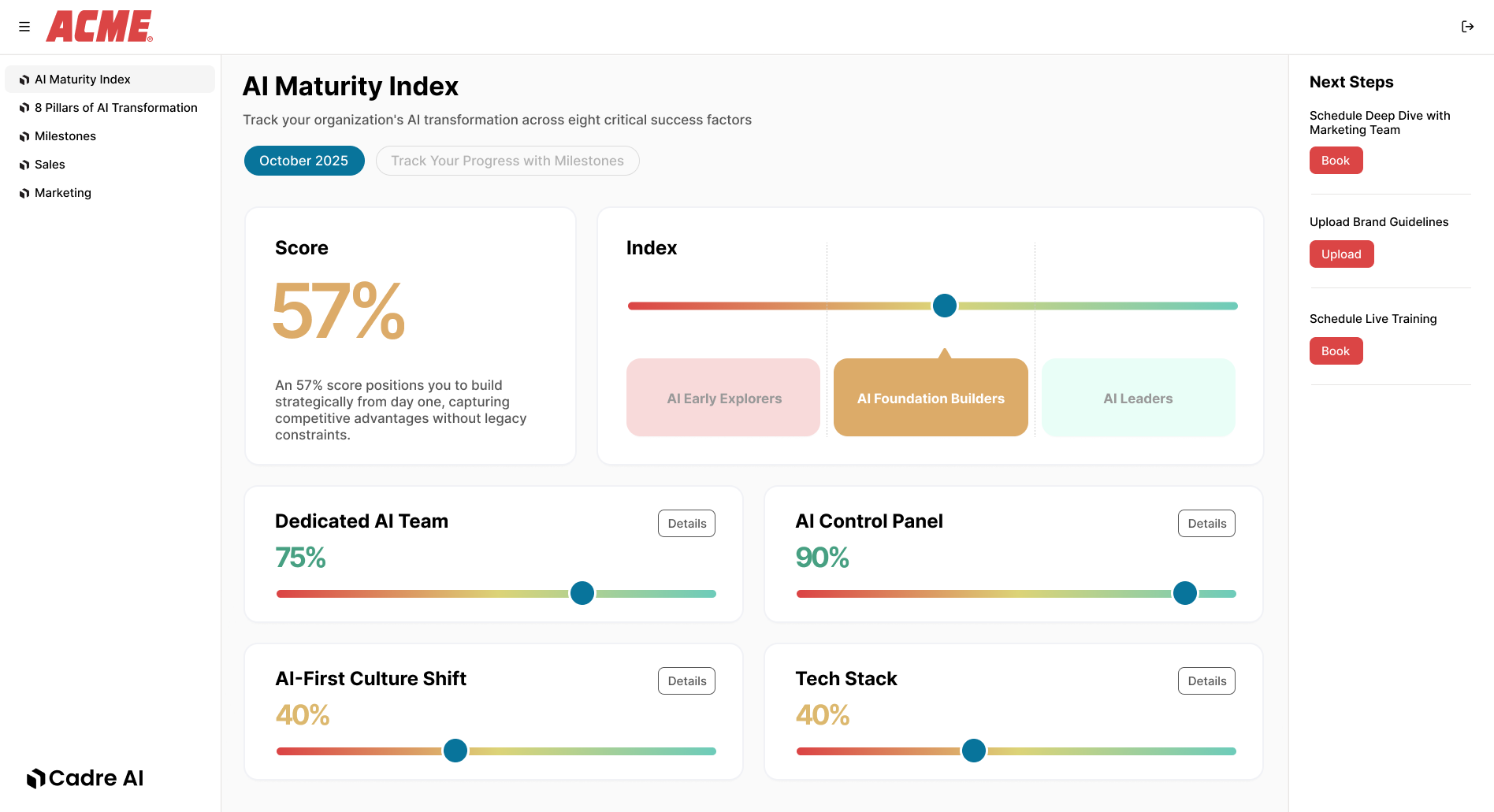

Track your AI results