Are Specialized Language Models the Future of AI? [Ft. Iddo Gino, Datawizz]

For other founders of early-stage companies, especially young ones, any lessons learned that you'd like to give them? Find something that you're really excited about building and a product that you really enjoy working on. The amount of dollars spent on APIs in the first half of 2025 was, like, $8 billion, and that is double what the entire 2024 was. What's driving this? The only way you innovate is when you're kind of under threat. There is a good chance that in ten years your business is either gonna radically change or be entirely gone, and I think that that's actually been one of the biggest drivers for innovation, and that's driving a huge amount of spend. What does the world look like in 2030 if this gets adopted? You kinda... Alright. We have Iddo Gino here, founder and CEO of Datawizz. Welcome. Thank you. Thanks for having me. I know we're gonna talk about a lot of stuff today, but I wanna start with just the idea of LLMs. Everyone knows what they are. We've got different ones trading places, it seems like, weekly, as now Gemini comes out with this new model. Everyone's raving about it. Now ChatGPT is towards the bottom. So I think there's a lot of people banking on LLMs being the future of AI. Do you have any Polymarket positions on leading LLMs? I don't. You don't? No. That seems like the thing to do right now. I don't think there's another technology where the pace of new models or new versions of something coming out has ever been this fast. Yeah. And it's been a fun industry to build in. Definitely, after coming... I mean, as you kinda know from my background, I spent close to a decade in the API integration space, which is great, but also just a lot of legacy and a lot of technologies that are very slow moving and hard to shift quickly.
So your background in the API space, actually, I should say you were seventeen when you founded RapidAPI. Guilty as charged. By twenty-four, you were a founder of a unicorn. How did that feel? I don't know. It was great to build. I enjoyed the journey. Actually, how big was the company at that point when you hit unicorn status? I mean, the metrics that we usually bring up: we were about two hundred people on the team. Okay. We were doing at that point a few hundreds of billions of API calls per week. So, yeah, already powering some pretty massive companies. I think at that point, we were probably looking at fifty or sixty percent of the Fortune 500 that had some sort of deployment on Rapid, which is probably the metric that I, in retrospect, was the most excited about. Before we get too deep on LLMs, for other founders of early-stage companies, especially young ones, any lessons learned that you'd like to give them? I can talk a lot about what not to do, probably more than what to do, but I think it's: find something that you're really excited about building and a product that you really enjoy working on, because, whoever said it, it's like chewing glass and staring into the abyss, and it definitely feels like that more often than not. I feel like if you don't enjoy the baseline product that you're working on, it can get very, very difficult at times. So at seventeen you started, and I would imagine you start ramping up employees by year two. When did you get to a point where you had to hire at the executive level, where it's not just doers under you? You start to have layers in the middle. I mean, we did it pretty quickly, probably a little too quickly, I think.
So there's kind of a point in time, I think, in general, in how people thought about building companies, where you get the young founder. They build the product. They build the initial thing that's working. And then as you wanna grow up the company and mature it, you bring in a bunch of seasoned executives. And that was very much my approach to how we built the company: we kind of got something working, so let's just bring in a bunch of people who've seen it, done it before, have really impressive resumes, and can help us scale. I think that's a way of doing it. I think that now one of the cool things actually with AI is you look at a lot of the companies, and because the space is so new, I think it gave a lot of founders kind of license. There isn't really a huge talent pool of seasoned AI executives to pull from. Right? If you're building Cursor, it's not like there is a huge pool of people who have built AI coding agents before. So I think that it gave a lot of people license to not go that route and actually experiment with just, you know, what if we bring a lot of young, ambitious people who are figuring it out for the first time? But guess what? Every startup is different, so everybody's figuring it out for the first time. It turns out that you can actually scale pretty meaningfully that way, and arguably better than the old route. So that's been really cool to see. It's wild. We're at Cadre AI. We do AI consulting and implementation. I look around, and I'm like, these kids look like they're twelve years old in some cases. And there's no school for it. These are the people that just got out of college, whether it's engineering or otherwise, and just learned this on their own. These are the pioneers of the space. Yeah.
And I think that you have a space where a lot of the terminology, the tools, the open source, the frameworks... sure, a lot of this has foundations that are older, but a lot of the day-to-day tools that you end up using in AI were all created over the last three years. Yeah. It's truly kind of the ultimate experience. Like, experience really kind of caps off fairly quickly. And obviously, I dropped out of school to start RapidAPI, and I think that we, back then, had the thought that there's a lot more that you can learn by doing than by, you know, sitting in a classroom, and I think AI is kind of proving that in a lot of ways. Okay. So you're a young founder. Did you have impostor syndrome? Did you have that period where you're going, I should not be doing this; this person I just hired who's seen this movie before should be doing this? Absolutely. And I think it's natural. We brought in truly phenomenal executives at Rapid, people who've built some great companies before. And when you end up sitting next to them, it's like, this person has seen the movie, has written the movie, has played the movie numerous times before. It ends up becoming kind of hard to go against the grain in a lot of ways. So even as founder, you're saying you found instances where you'd go, I really think we should go this other route, but everyone else is telling me that this way is the way to go, and I'm having a hard time pushing back. Yeah. And I'd say you kinda gotta remember that every company is different, and every product is different, and every go-to-market motion is different.
I think at Rapid, when I think about the times when we tried to do something against the common wisdom or how you typically do it, probably sixty percent of the time we ended up realizing that there is a reason that's how it's done, and falling back to doing it that way. Even in that sixty percent, there's probably something pretty nice about proving it for yourself from first principles and relearning how to do the thing. But on the other forty percent, those were actually really meaningful, where we did something very different from how the market typically does it, and it ended up being very much for the better at the company level. Certainly with Datawizz, especially because we're in the AI space and there's far less prior art, it's definitely been more of a structure of: let's try to build everything back from first principles and not necessarily just chase the common wisdom on how you typically build startups. Okay. So you had this background in APIs. Now, I think one of the recent stats I read was that the amount of dollars spent on APIs in the first half of 2025 was, like, $8 billion, which is double... I think that's just LLM APIs, pretty much. Yeah. And I think that's just enterprise, actually. That's wild. And so that's for the first half of 2025, and that is double what the entire 2024 was. What's driving this? So there's obviously a huge race to apply AI everywhere. This is an enterprise metric, so we can talk about the enterprise, but there is a huge drive to go and apply AI everywhere.
So Reed, the founder of Netflix, once talked about Netflix and how they've been able to continuously innovate, going from DVDs to streaming, and then from streaming other people's content to streaming their own content. And he said, and I'm probably butchering the quote, that the only way you innovate is when you're kind of under threat. And I think that AI took every company out there and put them in this position of, you know, you're under threat. There is a good chance that in ten years your business is either going to radically change or be entirely gone, and I think that that's actually been one of the biggest drivers for innovation. So now people are basically realizing that you take that technology and apply it everywhere or you end up dying, and that's driving a huge amount of spend. Obviously, you look at some of the recent studies. There was this MIT study that looked at some of the enterprise AI initiatives and saw that the vast majority are unsuccessful. Ninety percent. Ninety percent. I think it was even... yeah. But I think a lot of people took the wrong conclusion. Obviously, yeah, ninety percent go wrong, and I think there are a lot of learnings that we can take away from that about how we build those AI initiatives better now, to reduce the false positives or the false starts, so to speak. But I also think that when you look at that number, if you actually look at the five to ten percent that are successful, the amount of impact that they're already having on how those businesses are running their day-to-day processes is huge. And I think that those five to ten percent just show how impactful those initiatives can be. For a lot of people, the conclusion was, oh, maybe we shouldn't be applying AI, or maybe AI is not as big. I just think we've been kind of doing it wrong.
Makes sense. It's a three-year-old technology. It's gonna take us a while to figure out how to apply that thing in large, years-old companies. But if you actually look at where it's been successful, it's been incredibly successful. And even if you take the stat at face value, ninety percent are failing. What does failing mean? Well, I would think failing in this case, based on what I read on it, means they didn't fully adopt it. It didn't change the human workflow that they had to an extent where the humans are using it all the time. So it's really an adoption problem. You get AI that doesn't give you a good answer once or twice, and you're like, well, this thing sucks, versus going, well, we gotta fine-tune this thing more and really focus on it. If you also think about the three years of AI, if you look at the model improvement and just the sheer capability that you can get out of the models, it's probably been increasing like this. So a lot of these initiatives were based on models that didn't actually have that much capability. A lot of the techniques that we have today in how we build agents and agentic systems did not exist when a lot of these initiatives started. Then I think there's also a frame of mind shift, because a lot of the initial initiatives have basically been in two themes: how do we help our human workers be more efficient, or how do we eliminate a bunch of our human workers?
I think there's certainly going to be AI in those two categories, but I also think that there are a lot of places where you can deploy AI to do things that you could have just never done before, because the marginal cost of producing a new piece of software or writing content or transcribing a call or running an ambient agent used to be so high, and now that it's dramatically lower, thanks to AI, you can actually unlock a bunch of use cases that were impossible before. And I think that's where a lot of the huge opportunities are going to be. When we invented the steam engine, the big ROI on that wasn't from just automating horses away. Right. It was from all the new manufacturing capabilities that we could suddenly unlock, thanks to this new technology. But it takes time. Right? It takes a little while to figure out where you can actually apply this on net new initiatives. So now we have these LLMs out there. Everyone's excited about them. A lot of companies are building MCPs to be able to connect their data in, when those companies probably still have REST APIs that are not fully fleshed out or working properly. Is that where we're seeing a lot of this additional spend coming from? All these new MCPs, companies are focused on getting their data into these LLMs, and now people are actually interacting with them so much that that's where the spend is increasing? Yeah. And you kind of talk, right, about the jagged edge of AI, but there are now a lot of these jagged positions where we've actually hit the point where these models are extremely capable already.
And then if you let them agentically run within a system, the limiting factor is no longer the actual sheer capability of the model; it's just the ability of it to access information and the ability of it to take action automatically. So then it just becomes an integration play, which, you know, is a place that I've spent a lot of my career in. And I can say these are actually kind of hard problems to solve. I think it takes a lot of effort to actually get those systems to have the right permissions, access, and API access points to be able to gather the information they need and take action appropriately. But we're probably seeing in a lot of agentic scenarios that this is now the limiting factor on growth of the system. It's no longer the sheer capability of the models themselves. And so you have Datawizz now, the company you have, and this is focused on SLMs, not LLMs. So we're focused on specialized language models. If I kind of scope this out, the approach that we've taken over the last three years of building a lot of agents, AI applications, and AI workflows is that all of them end up using the same set of baseline LLMs. Right? So you're gonna have models that are just gonna keep improving and getting smarter and smarter, which has been the case so far, and are gonna keep scaling, and then everything is built on the same models. Right? So the model that a kid might be talking to about their homework, and a doctor is going to use to summarize their patient conversation, and an engineer is going to use to implement a new feature in a Java codebase, all of these are going to be the same GPT-5 or Claude 4.5 or Gemini 3. And I think that that approach has a lot of benefit, right, because we get these models out of the box, we can integrate them into an application, and we can build stuff pretty quickly on top of them.
There's definitely, in my mind, kind of a glass ceiling on how much we can scale those models, and you get to a point where the models can't keep becoming more and more accurate for more and more specialized use cases. So the same baseline model is gonna struggle to be as smart, so to speak, in all the different use cases and as adaptive to all the different specific tasks that it's applied to. But there's also, if you think about efficiency and cost and latency: because we're trying to adapt these models to be good at everything, they are getting larger and larger. We're talking about a lot of models that are over a trillion parameters large, and the efficiency implications of running those models at scale are just going to become harder and harder to justify over time. It's extremely expensive. It requires a lot of hardware, a lot of energy. Boom Supersonic just launched their newest product, which is a turbine for powering AI data centers, because our grid literally can't support the amount of data centers that we need to keep scaling that. I think that there is a better way: creating specialized, mission-specific models that are gonna be trained for specific use cases that are ideally as narrow as possible, but then much better and more accurate than off-the-shelf LLMs in those use cases. And because they're so narrow, they can be a lot smaller and thus cheaper and faster to run for those specific use cases. So you get, let's say, an AI company that's doing a sales coach. Right? So they need an LLM that understands what BANT is, what MEDDIC is. You know? They would need to understand that, hey, when the sales rep gets off the phone, we need to check to see if they booked the following call. So all these best practices around sales. Right now, I would imagine most of these AI tools are using the standard LLMs and training them as much as possible.
If there was an SLM that was focused on the sales side, let's say, and they were using that as a back-end LLM or SLM, that would, in theory, save that AI company money. Yeah. And if you think of it logically, what you want that model to be really good at is sales methodology, understanding sales conversations, and generating feedback and insights based on that. You don't necessarily need it to know how to code. You might not need it to know all the languages that it knows how to speak. You might not really care about how much science it knows out of the box. So there's a lot of stuff that you can prune out of the model. But then we look at a use case like that. Typically, the way you would build that is you're probably gonna run some STT model to convert the speech into written text. You get the transcript, and then you're probably gonna start running a bunch of different prompts on it. So one task is gonna be: please evaluate all the questions that the rep asked in the beginning of the call and give feedback on qualification. Then towards the end of the call: do you think that the rep wrapped the call nicely or not, or do you think that the rep responded well or did objection handling well? So there's a lot of different subtasks that you're going to run on the raw transcript. When we talk about specialization, each one of these subtasks can end up becoming its own model that is just extremely good at this one very narrow task.
But because it's trained for this very narrow task, A, it can be much better, in that we can optimize and reinforce that specific behavior and not really care about how well the model generalizes outside of that. So we can get very deep into this one task, and because it's a very narrow task, we can get a much smaller model that can be hundreds of millions of parameters or one to ten billion parameters, rather than a model that's a trillion parameters in size because it needs to be able to do everything. So these SLMs can be thousands of times smaller than an LLM? Yeah. And when you use one... so I guess, if we're going back to your example of there being an SLM in place for each part of this sales coach component: who's building them? So if you actually look into some of the further along or most cutting-edge agentic companies, and there's been a lot of blog posts about it recently, that approach of decomposing the problem into a set of specific subtasks and then training specialized models for those subtasks is becoming the go-to approach for scaling agentic systems today. So that's not necessarily something that we're doing that is net new. The issue is that today you actually have to go and build all those models yourself. So you have to collect all the data, decompose it into specific subsets, go for each one of those subsets and train the model, evaluate the models that you train, deploy them into production, and then continue evaluating and retraining over time. So that's a very time-consuming and resource-intensive process. This is where the platform that we're building with Datawizz is doing that automatically. So an automated system to collect the data, decompose it, and train the specialized models. So you would allow a customer to come in and say, here's exactly what I need it to do.
It would say, well, that needs five different SLMs, and it would help train them, and those would be small enough that they could... you now become more vendor agnostic as an organization. Right? Yeah. It's your models. You can run them anywhere. So, yeah, we sit between them and the LLM. Let's say they're using Anthropic. Right? So we sit between them and Claude, and start building an understanding of what the actual request payloads that they're sending to all these different models are, and start seeing the clusters. You typically start seeing very specific clusters, and then each one of these clusters becomes a model that we train for them. The other thing that we do, and this is something that we learned from talking to a lot of companies that were not successful with rolling out their own specialized models, is this: I think the old-school way of doing it was you collect a bunch of data, you run a training, you run evaluation, you get to a model that you like, you put it in production, and it's done. Game over. That's kind of the old-school way, and it didn't really work that well, because the systems, the prompts, the data structures change so frequently now as these applications evolve that a specialized model that works really well today might not be very good three to six months down the road. So it turns out these models that you train have a shelf life. We've seen a lot of companies that spend a lot of money and time on a fine-tuning effort to get a model, and then one of two things happens. Either the use case changes too much and the model doesn't age well, or their model is really good and then GPT-5 comes out and now it's trash in comparison. So this is why our approach is that it's not just a point-in-time thing; you actually need to continuously run that loop. Observe in production, collect more feedback signals, retrain, deploy. Collect, observe, retrain, deploy.
And that's something that has to run almost on a weekly basis to keep the models up to date. I'm curious. When it comes to your ICP here, you probably know exactly who you're going after. Do you find that the conversations that you have with them are at this level of depth? Or do you have to zoom out more and say, look, here's the analogy of what it's like? So we're, like, nine months into this. I think we're still figuring out the right point for us to go in. I'd describe the two extremes of the spectrum, and we've seen everything in between. On the one end, you have companies that say, hey, we built this thing. Basically, it's a set of prompts, and we're just sending them to OpenAI. We don't have any evals. Maybe we have some good and bad examples, but we don't really have a good measurement of how well it's working. But now this thing has exploded. Now it's working really well, and we're getting some complaints about accuracy, so we want to get the models to work better, or we have a huge cost or latency issue and we want to drop that down. So that's one end of the spectrum. There we typically deploy more hands-on and actually help them understand how to measure those models, set up the evaluations, and set up a lot of the observability around model performance, and then the actual training processes are downstream of that. And then on the other extreme end, we have companies that already have teams that have already set that up.
So they're doing that loop of collect data, improve the models, deploy them, and then observe in production, but they're doing it all manually today and basically looking to our platform to help automate that process. If it used to take them a month or two to close that cycle, now it's something that they can do in a matter of hours or days. So two different extreme ends of the spectrum there. Yeah. Okay. So let's say SLMs get widely picked up. Datawizz is out there powering all these. You're in the middle, between customers and the LLMs. You're figuring out all these prompts that they're sending through. You're building these SLMs, and you are now selling them out to people who have similar use cases. They're deploying them in their own environments, mobile, wherever, because they're so much smaller. What does the world look like in 2030 if this gets adopted? So I think what you probably end up looking at by 2030 is a bifurcation of where tokens flow. You probably end up having forty to fifty percent of tokens still flowing to large general-purpose models. Right? And these are going to be either net new applications, or edge cases in more built-out applications, or really broad use cases where it's hard to specialize the models. So you still have the big players, right? Like OpenAI, Anthropic, Gemini, Grok powering these large models. You already even see them specializing, so they have their coding model, like Codex. You have your computer use model. You have your agentic thinking and reasoning model. You have your general-purpose faster model. You have your real-time model. So each one of those big labs probably has five or six different categories of specialized models, but they're huge models. They're kind of the catchall application, and probably forty to fifty percent of tokens flow to them.
Yeah. But then fifty to sixty percent of tokens probably end up flowing to specialized, fine-tuned models that are deployed into these applications and are powering much narrower, specific use cases. And I think that the opportunity for us is that all these models need to be trained, evaluated, deployed, and then retrained, evaluated, deployed on a daily to weekly basis. So you're gonna see platforms like Datawizz, hopefully literally Datawizz, powering those models. Okay. Future-proofing Datawizz: how are you thinking about that as the founder? There's a lot of companies out there that say, oh, I'm building this thing, I'm gonna build this AI platform, and I just really hope that OpenAI doesn't just enable this as a feature. Do you believe that there's a chance that the LLMs enable what you're doing, in terms of building SLMs inside of them, in the future? I think that there is a world where it happens. To be fair, OpenAI does have some distillation capabilities built into their dashboard, so you could do some of these workflows inside of OpenAI. And it's probably likely that some of the other players will end up looking into some of that, and I think they've put out really great platforms as well. But I do think that you look at a lot of the ways that people are architecting their AI solutions, and there is a desire to be kind of model agnostic, recognizing, and I think it's something that you opened with, that every day you see a new model that is better. You start seeing different models that are better at very specific use cases. Now, if you're collecting all your data and doing all your training there, the more that you buy into a specific platform, the more you're making a very long-term bet on that LLM provider, whereas I think that people want to be, to some extent, agnostic of that.
I think a good analogy is cloud providers. A lot of what the big cloud hyperscalers are trying to do is provide more and more layers of abstraction that lock you into their cloud system. But then a lot of the more modern organizations end up creating their own abstractions. So you want to run things that are going to be cloud agnostic, to get mobility or to have the ability to play the multiple cloud vendors against each other or combine them in unique ways. And I think that that's how people are gonna end up thinking about LLMs long term. Alright. Iddo Gino, CEO and founder of Datawizz. You got a $12.5 million seed round for this? Yeah. Let's go, man. Good luck. Love it. Thank you for coming by today. Yeah. Thanks for having me. Alright.

Iddo founded his first company at 17, scaled it to unicorn status by 24, and spent nearly a decade in the API integration space. His position is straightforward: trillion-parameter models optimized to do everything are expensive, slow, and ill-suited for the narrow, repeatable tasks that make up the majority of production AI workloads. The companies gaining ground are decomposing their systems into specialized models — each trained for one specific task, orders of magnitude smaller, and meaningfully more accurate than any general-purpose model in that lane. He also gets specific about why most fine-tuning efforts quietly fail, why model capability is no longer what's slowing agentic systems down, and what the market actually looks like by 2030 when this plays out.

Topics discussed:

- $8B in enterprise LLM API spend in H1 2025 and what's actually driving it

- Decomposing agentic systems into narrow subtasks vs. single general-purpose model approaches

- Why fine-tuned models have a shelf life and the case for continuous weekly retraining cycles

- The integration and data access layer as the real production bottleneck in agentic systems

- MIT study: 90% enterprise AI initiative failure rate and what separates the 10% that work

- Iddo's 2030 prediction: 50-60% of tokens flowing to specialized models, not large labs

- Model agnosticism as a structural hedge against LLM provider lock-in

Datawizz helps enterprises reduce AI inference costs by replacing general-purpose LLMs with specialized language models trained for specific production tasks. Rather than routing every workload to the same trillion-parameter model, Datawizz sits between a company and its LLM provider, identifies clusters of repeatable requests, and automatically trains dedicated models for each one. More technically, the platform handles the full loop: data collection, task decomposition, model training, evaluation, and continuous retraining on a near-weekly cadence to account for model degradation as prompts and data structures evolve. The result is models that are orders of magnitude smaller than general-purpose LLMs, faster to run, cheaper to operate, and more accurate within their specific domain than any off-the-shelf foundation model can be.
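
As a rough illustration of the "identify clusters of repeatable requests" step described above, the sketch below groups logged prompts by how many words they share. This is not Datawizz's actual method, which the episode does not specify. The function names, the sample prompts, and the 0.4 similarity threshold are all assumptions made for illustration, and a production system would more likely compare text embeddings than raw word overlap.

```python
import re


def tokens(prompt: str) -> frozenset:
    """Lowercased word set; a crude stand-in for a real text embedding."""
    return frozenset(re.findall(r"[a-z]+", prompt.lower()))


def jaccard(a: frozenset, b: frozenset) -> float:
    """Share of words two prompts have in common, from 0.0 to 1.0."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_prompts(prompts, threshold=0.4):
    """Greedy one-pass clustering (hypothetical helper).

    Each prompt joins the first cluster whose first member is similar
    enough; otherwise it starts a new cluster.
    """
    clusters = []  # each entry: (word set of the first prompt, member prompts)
    for prompt in prompts:
        words = tokens(prompt)
        for seed_words, members in clusters:
            if jaccard(seed_words, words) >= threshold:
                members.append(prompt)
                break
        else:
            clusters.append((words, [prompt]))
    return [members for _, members in clusters]


# Hypothetical logged requests from a sales-coaching app: two recurring
# prompt templates, each filled in with a different transcript snippet.
SAMPLE_PROMPTS = [
    "Evaluate the qualification questions the rep asked in this call transcript: hello thanks for joining",
    "Rate how well the rep handled objections in this call transcript: the price is too high",
    "Evaluate the qualification questions the rep asked in this call transcript: hi what is your budget",
    "Rate how well the rep handled objections in this call transcript: we already have a vendor",
]

if __name__ == "__main__":
    for index, members in enumerate(cluster_prompts(SAMPLE_PROMPTS)):
        print(f"cluster {index}: {len(members)} prompts")
```

In this toy setup each resulting cluster corresponds to one recurring subtask, which is the unit the description says would get its own dedicated model.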

For other founders of early stage companies, especially young ones, any lessons learned that you'd like to give them?

I can talk a lot about what not to do, probably more than what to do, but I think it's: find something that you're really excited about building and a product that you really enjoy working on. I feel like if you don't enjoy the baseline product that you're working on, it can get very, very difficult at times.

One of the cool things actually with AI is you look at a lot of the companies, and because the space is so new, I think it gave a lot of founders kind of a license. There isn't really a huge talent pool of, like, seasoned AI executives to pull from. Right? Like, if you're building Cursor, it's not like there is a huge pool of people who have built AI coding agents before. So I think that it gave a lot of people license to not go that route and actually experiment with, you know, what if we bring in a lot of young, ambitious people who are figuring it out for the first time? But guess what? Every startup is different, so everybody's figuring it out for the first time.

I dropped out of school to start RapidAPI, and I think that we, back then, had the thought that there's a lot more that you can learn by doing than by, you know, sitting in a classroom. And I think AI is kind of proving that in a lot of ways.

One of the recent stats I read was that the amount of dollars spent on LLM APIs in the first half of 2025 was, like, $8 billion. And that's just the first half of 2025, and it's double what the entire year of 2024 was. What's driving this?

There's obviously a huge drive to go and apply AI everywhere, and I think that AI took every company out there and put them in this position of: you're under threat. There is a good chance that in ten years your business is either going to radically change or be entirely gone. And I think that's actually been one of the biggest drivers for innovation. So now people are basically realizing that you either take that technology and apply it everywhere or you end up dying. And that's driving a huge amount of spend.

And so you have Datawizz now. It's focused on SLMs, not LLMs.

So we're focused on specialized language models. I think, if I kind of scope this out, the approach that's been taken over the last three years of building a lot of agents, AI applications, and AI workflows is that all of them are gonna end up using the same set of baseline LLMs. Right? So you're gonna have models that are just gonna keep improving and getting smarter and smarter, which has been the case so far, and are gonna keep scaling, and then everything is built on the same models. Right? So the model that a kid might be talking to about their homework, and a doctor is going to use to summarize their patient conversation, and an engineer is going to use to implement a new feature in a Java codebase, all of these are going to be the same GPT-5 or Claude 4.5 or Gemini 3. And I think that approach has a lot of benefit, right, because we get these models out of the box, we can integrate them into an application, and we can build stuff pretty quickly on top of them.

There's definitely, in my mind, kind of a glass ceiling on how much we can scale those models. You get to a point where it's hard for the models to keep becoming more and more accurate for more and more specialized use cases. So the same baseline model is gonna struggle to be as smart, so to speak, in all the different use cases and as adaptive to all the different specific tasks that it's applied to. But there's also efficiency, cost, and latency: because we're trying to adapt these models to be good at everything, they are getting larger and larger. Now we're talking about a lot of models that are over a trillion parameters, and the efficiency implications of running those models at scale are just going to become harder and harder to justify over time.

If you actually look into some of the further-along or most cutting-edge agentic companies, and there have been a lot of blog posts about it recently, that approach of decomposing the problem into a set of specific subtasks and then training specialized models for those subtasks is becoming the go-to approach for scaling agentic systems today. So that's not necessarily something net new that we're doing. The issue is that today you actually have to go and build all those models yourself. So you have to collect all the data, decompose it into specific subsets, train a model for each one of these subsets, evaluate the models that you train, deploy them into production, and then continue evaluating and retraining over time. So that's a very time-consuming and resource-intensive process. This is where the platform that we're building with Datawizz comes in: it does that automatically, as an automated system to collect the data, decompose it, and train specialized models.

What does the world look like in 2030 if this gets, like, adopted?

What you probably end up looking at by, you know, 2030 is a bifurcation of where tokens flow. You probably end up having 40 to 50 percent of tokens still flowing to large general-purpose models. These are going to be either net new applications, or edge cases in more built-out applications, or really broad use cases where it's hard to specialize the models. You still have the big players, like OpenAI, Anthropic, Gemini, Grok, powering these large models, and you already even see them specializing. They have their coding model, Codex. You have your computer use model. You have your agentic thinking and reasoning model. You have your general-purpose, kind of faster model. You have your real-time model. So each one of those big labs probably has, like, five or six different categories of specialized models, but they're huge models. They're kind of the catchall application, and probably 40 to 50 percent of tokens flow to them.

But then 50 to 60 percent of tokens probably end up flowing to specialized, fine-tuned models that are deployed into these applications and are powering much narrower, specific use cases. And I think that the opportunity for us is that all of these models need to be trained, evaluated, deployed, and then retrained, evaluated, and deployed again on a daily to weekly basis. So you're going to see platforms like Datawizz, hopefully, you know, literally Datawizz, powering those models.

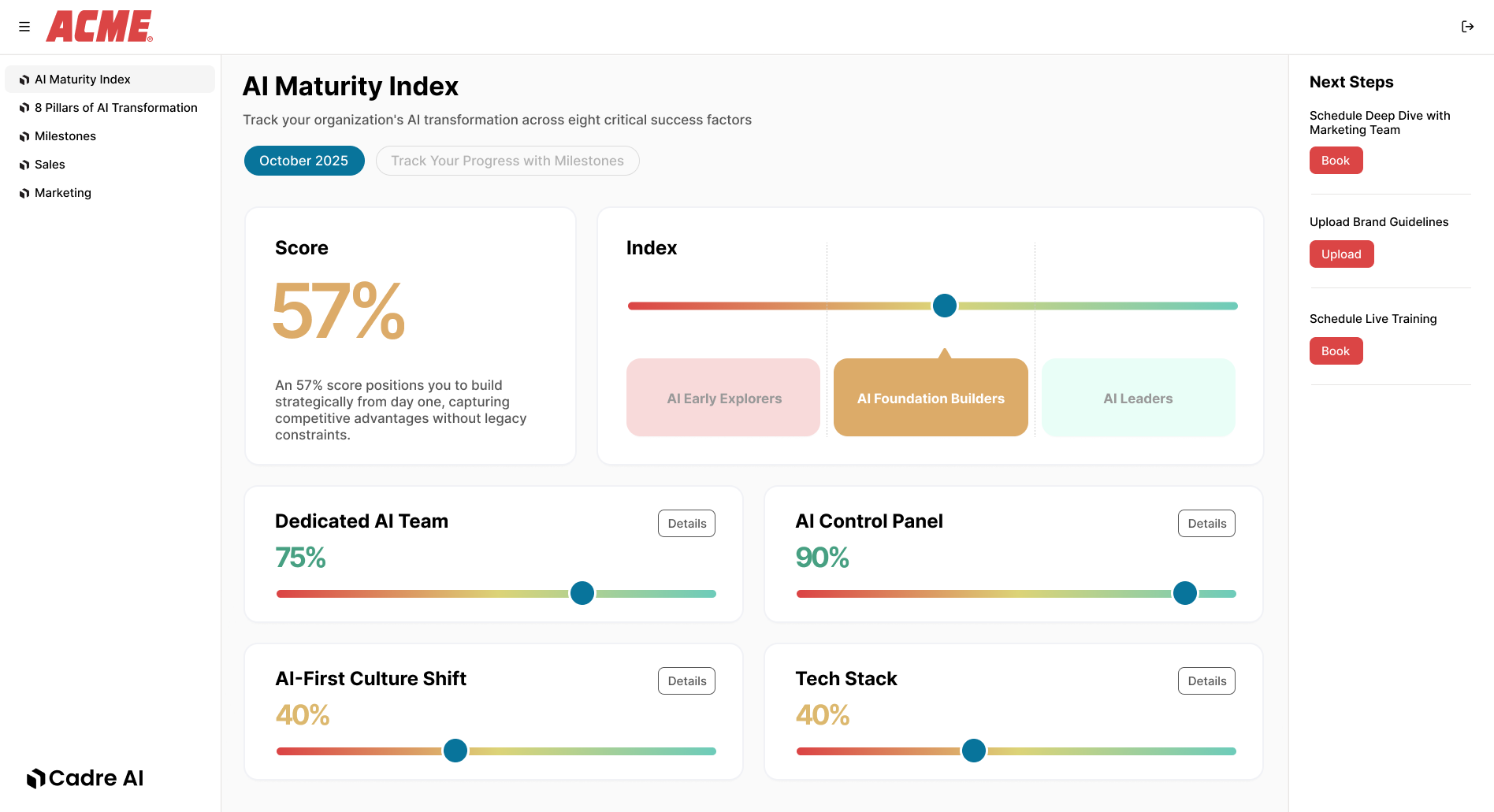

Track your AI results