Is MCP Actually Broken? The Truth About AI Agent Data Access [Ft. Gil Feig, Merge]

Now if someone said eighty percent of their code is written by AI, I'm like, why is it not a hundred? So I do believe, yes, that AI can write great SQL queries. It's getting better and better when it is able to answer complex questions. One interesting piece around soft guardrails that I've seen is temporary tokens. On the governance side, are you seeing a difference between, like, what's required between enterprise customers and mid market? So we have enterprise mid market customers, but those companies, I would say less so. Mid market somewhat, and then enterprise cares deeply about this. Twenty thirty, not that far away. What do you think the world looks like? Ultimately, I see a world where Alright. We have Gil Feig here, cofounder and CTO at Merge. Welcome. Thanks for having me. You guys started in twenty twenty. Twenty twenty. Right at the beginning of COVID. Yeah. I know where you're going with that. How was it? It was it was good for a lot of reasons. It was the best time to start a company for a lot of reasons, but, it was also tough. You know? We we we went in. We we decided to to fundraise during what people said was the worst time to fundraise. Which ended up being a phenomenal time. Oh, yeah. Mean, I mean, well, within two months, it actually was the best time to fundraise. Multiples had gotten really high, and, well, I guess you could say that was a good thing or not. But no, it was it was really good for us because there was just nothing else to do. So if at the end of the work day, you were like, well, should I just go home and sit on my couch, or can I just keep working? And so it was at least that social element of being around our small group that kept us going and moving fast. How small was it at the time? Dude, it was me and my co founder, and then within a couple months, it was four or five of us. So, yeah. And then we stayed around six people probably for for a while. Remote at the beginning, or were you always in person? 
We were always in person. It was me and my co founder in my apartment, and fortunately, we kind of followed the COVID restrictions. So as they weakened, that was kind of as the company grew. So we were able to stay in person the entire time. I think there was, like, a two week period where we had to shut down because of one of the variants. But Can you imagine if we had Claude Code or what's happening today back then, and you're locked down, and it's like two Code banners sitting in a house, they can't even go anywhere, nothing to do. Yep. Yep. No distractions. No distractions. Ugh. Just twenty agents running, you know, they'd be like, token maximalists. Yeah. But what you said about raising was interesting, because it did get crazy. Multiples were out of this world, but so many companies were having down rounds in the last couple years from that, you know? So they had this crazy valuation, which seems amazing. You're starting out or you're on series A, series B, you get this crazy valuation, and then you gotta live up to it. Right? Yeah. I mean, had companies raising in the billions with zero or, like, you know, tens of thousands in revenue. Was crazy. Yeah. And all of those companies are in, candidly, a really bad position now. So Okay. So you guys founded twenty twenty. You've been going at it for six years or so now. How many employees? We're now one twenty. Wow. Nice. Yeah. Do you feel, by the way, that AI has enabled you guys to stay leaner than you would have been otherwise? Oh, yeah. Oh, yeah. I mean, I I think it's like I I think you a lot of what you hear, in the media or on on X is is wildly exaggerated. Like, the idea that a year ago, unnamed big tech companies said eighty percent of their code was being written by AI, like, that's not true. We all have the same models. We saw what was happening. Now, if someone said eighty percent of their code is written by AI, I'm like, why is it not a hundred? Right. Yeah. It's gotten so much better. 
So, yeah, we're using it. We're using it across the board, every function. We have mandates, and we actually just rolled out Claude Code to the entire company, as kind of a bet, and we have teams now fully rolling out their own internal apps Yeah. Like it's nothing. We have engineers who were resistant at first, even who weren't AI. Yeah. They were resistant, and then But I think it still is that mindset of, Is this gonna Am I gonna lose the physical ability? Am I gonna But, I mean, when the calculator came out, nobody was going to the abacus or whatever. And as soon as we got them going and we made them realize, like, this is going to enable you. Like, you're gonna be praised if you have four different computers running code all at the same time, and, like, this is great. Yeah. English is the programming language now. That's it. It used to be no one's writing Assembly now, and you're not mad at yourself for not knowing Assembly. And so when English is the language, you're not gonna be mad at yourself that you don't speak Java. That's right. So you guys have I mean, for anyone that doesn't know, Merge is, think of it as a unified API. But I think the thing that's most interesting with you've got these agents. Everyone's using agents now. Right? They're going and doing the work, and you have whether it's Claude Code or whatever it is that you're using, you need AI to go to other places to pull data from disparate sources in order to do the work that you needed to do? How has the, you know, expansion of agents forced the, you know, hands of people to figure out how to get these different APIs in place to actually connect to those sources? Yeah. So, you know, last year was supposed to be the year of agents. The year before was the year of, what was it, year of search, I guess, with AI summarization, and then all of a sudden, it becomes the year of agents. 
And with agents, that meant you need those agents to actually be able to take actions. And so you started seeing people, you know, trying to question, like, how do I connect my agents to these third party sources? And then you saw the rise of some new tech like MCP, which is the the stand one of the standards. I think I would have some some angry people if I were like, it's the way that you connect to third parties. But you saw a standard come out, and then a lot of companies rush to release an interface or an MCP server that let you easily connect agents to their services. And a lot of it was rushed out. A lot of it was for marketing. And so you saw a lot of crap flood the market, and I think over time it's gotten a bit better, but definitely still a problem. You know, I would be so annoyed if I'm OpenAI or anything, because if connect it to HubSpot and then I ask it a question and it doesn't know the answer, I'm like, well, OpenAI sucks. Yeah. You know? And really, like like you said, a lot of these companies were rushing. They may not even have their APIs completely built out to be able to capture every single custom field or whatever they have, and now they're rushing and pivoting to MCPs, and what we've realized is it really isn't getting the level of data that we need Yeah. In order to do the job. Do you feel like MCPs are a distraction, or are they the way of the future? Yeah. So what MCPs are, high level, is is basically a a thin wrapper around API endpoints in in ninety nine percent of these cases. It's a thin wrapper around API endpoints. And so I wouldn't blame MCP for anything. I think it's, again, it's simple tech. I think what I would blame is the underlying APIs, which don't give you the ability to do a a semantic query. You can't ask an API, which of my customers were upset last year? But you can say, give me all tickets from last year, and I will then go through them and figure out who was upset. 
Whereas what a lot of companies do, like OpenAI, who work with Merge on synced connectors, is they sync across a full set of the data. For that, you don't really need MCP. You just hit the API directly, pull in everything, and then you vectorize it. You put it, you embed it in a vector database, which gives you the ability to query semantically. So then, because you have all of that ticketing data stored locally, you can literally send the query, which of my customers were upset last year, get all that data back. And actually, the interface for that server, for that vector database, could be MCP. So it's not about MCP. It's about the underlying data. Are you hitting simple endpoints to fetch, you know, little bits of info, or are you hitting sort of a store that has semantic data structured and ready for you to pull it? You know what I'd love your opinion on is I feel like at Cadre, we consult a lot of businesses, and one of the things that everybody wants is, well, I just want to be able to talk to my data. Right? I just want to be able to ask a question and have it connect to every I mean, at least my opinion is I've never seen a company do that really well. Yeah. And do you believe that it is because, like, we're not spending the time? Like, if somebody spent the time, let's say, whether it's Snowflake or whatever the underlying database is, like, we spent the time using a platform and get all the APIs and feed it all in, cluster it appropriately, or whatever it is, are we at a point where if we did that right, we could just ask AI any question about our data and it could give it back correctly? I definitely think so, but I think it's, again, all about the access pattern. So if the AI is not able to query for exactly what it wants when it needs it, it's just not gonna be able to provide good answers. Similar to how, like, picture yourself as a human. You're talking to some third party API. 
You're like, again, I'm gonna keep on this example. Like, which customers were upset last year? As a human, you literally have to go and fetch every ticket. Like, AI is not some, there's no magic there. Right? It's just like replacing a human brain, maybe a little bit smarter these days. Faster. Yeah. Faster. But, you know, it's not a miracle worker. And so once you have that data local, structured well, you have this sort of ability to query it and get exactly what you want from that local DB, whether it's a vector store or Snowflake or whatever it is. That's where it's gonna be able to perform really well. So I do believe, yes, that AI can write great SQL queries. It's getting better and better, and it is able to answer complex questions if data's stored and structured right and has the right access patterns. Structured is a key part to that. Right? I mean, if there's no structure or clustering of data, the compute power that's gonna be needed and the latency of asking it a question like that and having to search every file, right, and then pull the right ones and then maybe go back and check again to make sure it wasn't wrong, like, that's gonna be massive in terms of cost and time. Yeah. Really massive. And also, the more you let AI string things together, the more likely you are for things to go wrong. You don't wanna let AI really do a lot of the, like, thinking on the actual data. Right? It should just summarize what it gets back. And if you have it in a really bad access pattern where it's like running this query and running this query and trying to combine data, that's where you start to see things go wrong. So ideally, yeah, you can deal with a single query or a couple queries, get all the data you need because you've structured it right in your store. What's your point of view on the sync and store methodology versus, like, real time pass through? You have to combine both. 
I actually just wrote a a LinkedIn piece on this, but they're sort of like what I what I call live slash MCP style connectors, and then there's the synced and stored connectors. You really need both for most use cases. I think there's some that that's not the case. A good example would be, you know, let's say, like, the Walmart customer support bot. Right? You're going and you're talking to it, and you're like, hey, you know, what, I want to return this order. Right? It's really easy for MCP to just go query their API and say, Okay, order ID this, you know, initiate a return, whatever. It's not necessary for it to have a full copy of all the orders stored. You can say, find the order where this customer was upset. Right? Like, you just don't need that. So I think it depends on the use case. A lot of use cases need both, but a lot also just need the live MCP style. I'm curious about your kind of personal trajectory here. I have you know, I do some research on before we chat. I saw a software engineer at twelve, cease and desist from Facebook at sixteen. That's true. Why you dug deep? Okay. How has kind of the world changed in terms of, like do you feel like you've been on a linear path of, like, growing, you know, in in the technology knowledge technology understanding, or has there been this exponential curve in what's been poss I we know that, like, the change in what's been possible is is, at least to the outsider, nontechnical person, is, like seems like it's very steep. Yeah. Do you feel like that if you feel that way, or do you feel like because you've been on kind of the cutting edge of this for so long, do you feel like it's been more linear? Is like, is this specifically about AI that you're referring to? Just technology in general of, yeah, I mean, AI is part of it, but like, you were obviously on the cutting edge. If Facebook is telling you Stop. Whatever you're doing when you're sixteen years old, you've been on the cutting edge. 
So I guess I'm curious, like, do you feel like this the curve in what it can do has been as steep as maybe people who are not as technical would? Putting AI aside, I actually don't feel like anything has ex has has accelerated what's possible. I think that things have gotten easier. Everything's become a library. Right before this sort of, like, push into AI, it was kind of a joke that startups are really just putting puzzles together. You're just combining a bunch of puzzle pieces. I have my Twilio, you know, SMS API, and I have my SendGrid email API, and I have, you know, AWS or Heroku for my back end. Like, you're just tying things together and plugging them all together, made it easier and faster to launch new things. Yeah. But AI, like, it's a whole different ballgame. You know, not only does it now make building things easier, but it's net new tech. It enables things that weren't possible before. And and I think, candidly, it's bigger than the iPhone. It's bigger than anything else. Like, this is our is our second industrial revolution. Yeah. It's so funny. We have, just being in the AI space, we're a consultancy. Like, we have some kids sent, our our one of our cofounders, Sushi, to the office the other day. He's like, I wanna, you know, interview as a a forward deployed engineer. You know, we've got, like, so many people just wanna be part of this revolution. It to me, I think of, like, the Internet nowadays. Like, you think about people who were, like, big in, like, dot com. They kinda had their careers, like, mapped for them after that. One of these early startups and and that, and, like, you're even if that sort of didn't do that great, there wasn't a phenomenal exit or anything. It's like, ****, you were there at the beginning. Yeah. I think this is where people wanna be right now. They're like, this is the beginning. Yeah. It's it's so true. We we've never seen a change like this in our lives. 
You have to be involved in it, or else you're you're gonna be left behind. So you guys have all the APIs. You've got data flowing in. You've got agents that are able to make decisions and access specific tools. Two questions I have on this is, one, how do you guardrail, first off, in in terms of, like, don't give it access to the it can have access to everything, but then when it's doing this specific task, don't give it access to the financial data or whatever. Are we are you able are companies able to be have a connection to these disparate systems but wall off specific ones for certain agents? Yeah. So there there's kind of two sort of, blocks you can have. Right? There's the soft blocks, and then there's the hard, this agent does not have access to this. Right? So soft blocks would be things like prompting saying, never do this. You shouldn't do this. Those aren't as great, obviously, but they are becoming slightly more acceptable, whereas in the past, that was never allowed. So starting with the hard blocks, the first thing that people tend to do is on the API keys themselves, this hasn't changed. You lock down what the actual key itself can access. So don't let it run delete actions. Don't let it run, you know, again, depending on the use case, but, generally, you wanna be blocking the destructive or the sort of, like, create and update type actions. Reading, you also wanna be careful of because you can be taking data from a system and then allowing it to be sent to any other system. So you need to be really careful. The soft blocks is is really interesting. Again, things like, you know, having having prompts that say, if if this seems off topic, don't follow along. Like, get back to the main topic. There's a lot of different, like, ways you can do that. But ultimately, those are not real blocks, and and they allow exploits to happen. And there is this view in software of, like, something that can happen. Anything that can happen will happen at some point. 
And so I think we are waiting for some some sort of issues to occur. And that's the reason why, like, at Merge with our agent handler, our our sort of live product, the first thing we did was build in the the strict guardrails, the, you know, disable certain tools so they can never be run by the agent even if the API key has access. Also, encourage the customers to to not generate API keys that have access to all of those things. And then also encourage people to really lock down what connectors and what in general they're exposing to that agent. And actually, like, one interesting piece around soft guardrails that I've seen is, in the past, we've always had the idea of temporary tokens. And that's because, you know, you wanna you wanna rotate them out in case it leaks in the future. Oh, that one's been disabled for months. Like, that's know, we're onto a new token at this point. But now we're seeing people want temporary tokens to give an agent temporary access to to, you know, tools and whatever. And to me, that's not preventing against some potential thing that might happen. That's saying, hey, agent, you only have fifteen minutes to wreak havoc now. You don't have unlimited time to wreak havoc. I I I think we're gonna see a lot of attacks happening, a lot of bugs and issues starting to occur, but I also do think that we're we're evolving how we think about security and risk. What you said, sticking with me, of the, hey, if you're going off the rails, come back. Yeah. Right? That prompt, if you think about a human You've got an intern, and you're like, Hey, go research this thing. By the way, if you start getting into this Stop. Come back on on track. That may work when they're only When they It takes them fifteen minutes to do a task. When it takes an agent a second to do a task and it's happening over and over and over and over, like you said, the possibility of it going off the rails is just so much higher. Yeah. I mean Right? Because just the repetitions. 
Yeah. And we have the I think it was the head of AI safety at Meta. I'm sure you saw that. It was everywhere. But she asked it to help clear out her emails, and next thing you know, went wild and started deleting emails, and she didn't know how to stop it. And this is the head of AI safety. Like, this sort of thing is just happening, and it's gonna happen more and more. It's like Silicon Valley where he asked her to go find cheap hamburgers, it, you know, sends a big crate of raw meat. You know? Yep. Like, that will happen. It will happen. Yeah. One of the things that I've always I feel like we're seeing a trend of is, and I've always wondered why this hasn't happened in other areas, like, I always get annoyed that I gotta go to Netflix, I gotta go to Peacock, I gotta go to these things. Why can't I just have one thing, and maybe it's out there and I'm not aware of, where I could just say, hey. I have access to these. Give me all my stuff in one Yeah. One place. I think that's happening with LLMs. Right? The idea of, we are an OpenAI shop. We are a Gemini shop. Like, it's harder and harder because Claude's amazing at something else. Right? You need Nano Banana if you wanna do images. Right? Yeah. There's different models that have great uses for different things. So having chat interfaces that are LLM agnostic has been, I think, on the rise. Have you guys seen that in your side as well? Yeah. Absolutely. We're seeing demand for people to use a ton of different models and explore them, and a lot of trouble from people too because, you know, they have to go, you know, sign agreements with all the different LLM providers, especially if they're mid market or enterprise. They need to settle the legality of the data storage and the residency and all of that. Then on top of it, they want the best possible model for each use case. They worry about cost. 
They worry about, you know, how do we route certain things to the right places, which actually we have a product that we're launching called Merge Gateway that handles a lot of this. Nice. So it's sort of a routing layer. And there are also open source SDKs that help you kinda easily from your code base connect to all of them. But our product is focused on sort of, like, routing to the right place, but also the governance, making sure that data's not stored, that it's sent to the right regions for processing, that your costs are optimized, you're able to, on the fly, pivot and always adapt to the latest models. On the governance side, do you see a big difference between you have enterprise clients. Do you have mid market as well? Yeah. Mid market as well. Okay. On the governance side, are you seeing a difference between, like, what's required between enterprise customers and mid market? Yeah. Definitely. So we have enterprise and mid market customers both, and we do also have some velocity or down market customers. And those companies, I would say less so. Mid market somewhat, and then enterprise cares deeply about this. But also what's interesting is we sell to a lot of companies that use Merge's products to offer integrations or AI features to their customers, and so we have a lot of mid market companies that have enterprise customers, so they're white labeling it. And so they care deeply. Even actually down market companies, if they serve enterprises Yeah. They care about the governance and where that data's yeah, everything about it. Yeah. Okay. So, twenty thirty, not that far away. What do you think the world looks like? I mean, in the sense of, in your guys' world of data access, security, governance, are we all are LLMs having their own data lakes where you're just MCPs are connecting in and they become your data lakes? 
Is everybody moving to Snowflake or some kind of vectorized, you know, database where they can ask, like, any question to their data? What do you think the world looks like? So I don't think so, and I actually kinda hope that doesn't happen, even though it would be very beneficial for Merge as a you know, we have one of our products as a synced data product. But I don't think it'll happen for a few reasons. Notably, it's really expensive to replicate data. So you're pulling all the data out of systems and recreating it, so it's expensive for you to actually pull it and keep it in sync. It's expensive for the provider. It's expensive for you. Then once you have that data, you then have to vectorize and embed it. Vectorizing and embedding requires an LLM, and it requires an underlying vector database, all of which are really, really expensive. You're just adding costs on here. So I think companies will opt for MCP or live, you know, style connectors wherever possible. Again, we talked about the use cases earlier. I think it's just really gonna depend on that. But, yeah, ultimately, I see a world where we're kinda split. There is a world where companies start offering sort of intent based or semantic based endpoints in their API, so we could, instead of pulling in all the data from the ticketing system and then vectorizing it and searching it, actually, what if Linear and Asana released an endpoint that let us say, who was upset last year? They've vectorized it already, and they just return data for LLM. They're not incentivized to do that, because then the expense goes to them, but that would be an interesting world. You're also assuming that SaaS still exists. Yeah. Also assuming that SaaS still But I guess, like, what is SaaS long term? Like, is it just a database? I don't really know what it gets stripped down to. 
There's an interesting argument to say that, you know, companies may be marketing their services to LLMs. You have to. If LLMs are where people are going to interact versus a browser, you know, or or some kind of application, then all you need to do is get the schema right of like your data and products so that the LLMs can read it and surface it up when people ask that or when they need it. Yeah. I I really struggle with this because I I I think it's it's like, ultimately, if the if humans want no participation in how work gets done and what gets done and whatever, there's no need for any of these tools. But if humans wanna participate, it's been our view for thousands of years that a picture's worth a thousand words, and so a visual is valuable to us. It is important. And so, again, I think it's really going to be all about human agency. If we want to be involved, I think you guys won't go away. If we're comfortable just delegating all work to the LLM and not caring about, you know, sort of understanding or anything, I think we'll see that more. Well, I wonder if there's a mix too where you say like, hey, I wanna shop for this, and it's creating an experience for you that has all the imagery, that has all the you know, so it's showing you this beautiful interface of all the different tool you know, things that you could shop from. Yeah. That's true. Lash Live UIs on the fly and all of that. Yeah. For sure. It's awesome. Alright. Gil, thanks so much for coming by. Thank you. Appreciate it. Appreciate it. Thanks so much.

Most teams building AI agents are blaming MCP when their integrations fall flat. Gil Feig, co-founder and CTO of Merge, says that's the wrong diagnosis entirely — and he built the infrastructure layer that connects agents to enterprise systems to prove it.

Gil makes the case that MCP is a thin wrapper around API endpoints, and the actual failure point is the access pattern underneath it. He lays out a clear framework for when synced-and-stored data is required versus when live connectors are sufficient, explains why the "talk to your data" promise keeps breaking in practice, and shares how Merge approached agent guardrails from day one, including why prompt-based soft restrictions leave room for exploits and why temporary tokens, which teams now want for giving agents time-limited access, only shrink the window in which an agent can do damage. He also argues that a world where all enterprise data flows into centralized AI-queryable lakes is economically flawed and probably not where the market lands.

Topics discussed:

- MCP as a thin API wrapper and why the access pattern is the real failure point

- Sync-and-store vs. live connectors: the decision framework for each

- Hard vs. soft agent guardrails and where soft blocks break down

- Temporary tokens for agents and why time-limited access is not a real block

- Why "talk to your data" implementations fail without structured local data stores

- The true cost of full data replication, vectorization, and embedding at scale

- Enterprise vs. mid-market governance requirements for LLM data routing

- Why the all-roads-lead-to-data-lake future is economically unlikely

Merge is a unified API platform that helps companies build and manage integrations for their products and AI agents, providing a single connectivity layer across third-party systems rather than requiring individual API connections for each. Under the hood, Merge offers two core patterns: synced-and-stored connectors that pull and structure third-party data locally for semantic querying, and live connectors that hit external APIs directly for real-time actions. Their agent handler ships with hard guardrails built in, including tool-level restrictions and scoped access controls, and their newest product, Merge Gateway, handles LLM routing, data residency compliance, and cost optimization across multiple model providers.

Do you feel, by the way, that AI has enabled you guys to stay leaner than you would have been otherwise? Oh, yeah. A lot of what you hear in the media or on X is wildly exaggerated. Like, the idea that a year ago, unnamed big tech companies said eighty percent of their code was being written by AI. Like, that's not true. We all have the same models. We saw what was happening. Now, if someone said eighty percent of their code is written by AI, I'm like, why is it not a hundred? Right. It's gotten so much better. So, yeah, we're using it. We're using it across the board, every function. We have mandates, and we actually just rolled out Claude Code to the entire company as kind of a bet, and we have teams now fully rolling out their own internal apps Yeah. Like it's nothing.

Do you feel like MCPs are a distraction, or are they the way of the future? What MCPs are, high level, is basically a thin wrapper around API endpoints, in ninety nine percent of these cases. It's a thin wrapper around API endpoints. And so I wouldn't blame MCP for anything. I think it's, again, it's simple tech. I think what I would blame is the underlying APIs, which don't give you the ability to do a semantic query. You can't ask an API, which of my customers were upset last year? But you can say, give me all tickets from last year, and I will then go through them and figure out who was upset. Whereas what a lot of companies do, like OpenAI, who work with Merge on synced connectors, is they sync across a full set of the data. For that, you don't really need MCP. You just hit the API directly, pull in everything, and then you vectorize it. You put it, you embed it in a vector database, which gives you the ability to query semantically. So then, because you have all of that ticketing data stored locally, you can literally send the query, which of my customers were upset last year, get all that data back. And actually, the interface for that vector database could be MCP. So it's not about MCP, it's about the underlying data. Are you hitting simple endpoints to fetch little bits of info? Or are you hitting sort of a store that has semantic data structured and ready for you to pull it?
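
The contrast Gil draws here, between an endpoint that can only hand back rows and a local store that can be queried by meaning, can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: the tickets, the `embed` function, and the in-memory `INDEX` are all hypothetical stand-ins. A real sync-and-store setup would use an actual embedding model and a vector database, and nothing here reflects Merge's or any vendor's real API.

```python
import math
from collections import Counter

# Hypothetical tickets, standing in for data synced from a ticketing API.
TICKETS = [
    {"id": 1, "customer": "Acme", "text": "I am upset and frustrated, the export keeps failing"},
    {"id": 2, "customer": "Globex", "text": "How do I add a new user to my workspace"},
    {"id": 3, "customer": "Initech", "text": "Very upset about the billing error, this is unacceptable"},
]


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().replace(",", " ").split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Sync-and-store: embed every ticket once, at sync time, and keep it locally.
INDEX = [(ticket, embed(ticket["text"])) for ticket in TICKETS]


def semantic_search(query, k=2):
    """Rank locally stored tickets by similarity to the query."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda pair: cosine(q, pair[1]), reverse=True)
    return [ticket for ticket, _ in ranked[:k]]


def live_list_tickets():
    """Live-style access: the endpoint returns rows; the caller reads every one."""
    return list(TICKETS)


upset = semantic_search("upset frustrated customer")
print([t["customer"] for t in upset])
```

With the live-style call, the agent has to pull all three tickets and decide for itself who was upset; with the stored index, one query returns the closest matches. As the excerpt notes, either access path could still sit behind an MCP interface.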

Putting AI aside, I actually don't feel like anything has accelerated what's possible. I think that things have gotten easier. Everything's become a library. Right before this sort of, like, push into AI, it was kind of a joke that startups are really just putting puzzles together. You're just combining a bunch of puzzle pieces. I have my Twilio, you know, SMS API, and I have my SendGrid email API, and I have, you know, AWS or Heroku for my back end. Like, you're just tying things together and plugging them all together, made it easier and faster to launch new things. Yeah. But AI, like, it's a whole different ballgame. You know, not only does it now make building things easier, but it's net new tech. It enables things that weren't possible before. And I think, candidly, it's bigger than the iPhone. It's bigger than anything else. Like, this is our second industrial revolution.

How do you guardrail in terms of, like, it can have access to everything, but then when it's doing this specific task, don't give it access to the financial data. Are companies able to have a connection to these disparate systems but wall off specific ones for certain agents? Yeah. So there's kinda two sort of blocks you can have. Right? There's the soft blocks, and then there's the hard, like, this agent does not have access to this. Right? So soft blocks would be things like prompting, saying, never do this. You shouldn't do this. Yeah. Those aren't as great, obviously, but they are becoming slightly more acceptable, whereas in the past, that was never allowed. So starting with the hard blocks, the first thing that people tend to do is, on the API keys themselves, this hasn't changed. You lock down what the actual key itself can access. So don't let it run delete actions. Don't let it run you know, again, depending on the use case, but, generally, you wanna be blocking the destructive or the sort of, like, create and update type actions.
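
A hard block of the kind described here is enforced in code, outside the prompt, so the agent cannot talk its way past it. The sketch below is one hypothetical way to express that idea in Python: `AgentToolbox`, `ToolBlocked`, and the two ticket functions are invented names for illustration and are not Merge's agent handler or any real library.

```python
class ToolBlocked(Exception):
    """Raised when an agent tries to call a tool it has not been granted."""


class AgentToolbox:
    """Hypothetical wrapper that exposes only an allowlisted subset of tools."""

    def __init__(self, tools, allowed):
        self._tools = dict(tools)
        self._allowed = frozenset(allowed)

    def call(self, name, *args, **kwargs):
        # The check runs before the tool does, regardless of what the prompt said.
        if name not in self._allowed:
            raise ToolBlocked(f"tool {name!r} is disabled for this agent")
        return self._tools[name](*args, **kwargs)


def read_ticket(ticket_id):
    """Stand-in for a read-only API call."""
    return {"id": ticket_id, "status": "open"}


def delete_ticket(ticket_id):
    """Stand-in for a destructive API call."""
    return f"deleted {ticket_id}"


# Both tools exist, but this agent is only granted the read action.
toolbox = AgentToolbox(
    tools={"read_ticket": read_ticket, "delete_ticket": delete_ticket},
    allowed={"read_ticket"},
)

print(toolbox.call("read_ticket", 7))
try:
    toolbox.call("delete_ticket", 7)
except ToolBlocked as exc:
    print(exc)
```

The same restriction would normally also be applied on the API key itself, as the excerpt says, so that a bug in the wrapper does not become the only line of defense.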

One interesting piece around soft guardrails that I've seen is in the past, we've always had the idea of temporary tokens. And that's because, you know, you wanna rotate them out in case it leaks in the future. Oh, that one's been disabled for months. Like, that's, you know, we're onto a new token at this point. But now we're seeing people want temporary tokens to give an agent temporary access to tools and whatever. And to me, that's not preventing against some potential thing that might happen. That's saying, hey, agent. You only have fifteen minutes to wreak havoc now. You don't have unlimited time to wreak havoc. So I think we're gonna see a lot of attacks happening, a lot of bugs and issues starting to occur. But I also do think that we're evolving how we think about security and risk.
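
To make the fifteen-minute point concrete, here is a small Python sketch of a time-limited token check. Everything in it is an assumption for illustration: `TempToken`, `issue_token`, `is_allowed`, and the scope strings are hypothetical names, not any provider's token format.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class TempToken:
    """Hypothetical short-lived credential handed to an agent."""
    value: str
    scopes: frozenset
    expires_at: float


def issue_token(scopes, ttl_seconds, now=None):
    """Create a token that is valid for ttl_seconds from now."""
    now = time.time() if now is None else now
    return TempToken(secrets.token_urlsafe(16), frozenset(scopes), now + ttl_seconds)


def is_allowed(token, scope, now=None):
    """A request passes only if the token is unexpired and carries the scope."""
    now = time.time() if now is None else now
    return now < token.expires_at and scope in token.scopes


# Fifteen minutes of read access, using a fixed clock so the example is repeatable.
token = issue_token({"tickets:read"}, ttl_seconds=900, now=1000.0)
print(is_allowed(token, "tickets:read", now=1500.0))    # inside the window
print(is_allowed(token, "tickets:read", now=1900.0))    # window has closed
print(is_allowed(token, "tickets:delete", now=1500.0))  # scope never granted
```

Note what does the real limiting in this sketch: the expiry only bounds how long the agent can act, which is Gil's objection, while the scope set is what bounds which actions it can take at all.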

On the governance side, are you seeing a difference between, like, what's required between enterprise customers and mid market? Yeah. Definitely. So we have enterprise and mid market customers both, and we do also have some velocity or down market customers. And those companies, I would say less so. Mid market somewhat, and then enterprise cares deeply about this. But also what's interesting is we sell to a lot of companies that use Merge's products to offer integrations or AI features to their customers. And so we have a lot of mid market companies that have enterprise customers, so they're white labeling it. And so they care deeply. Even actually down market companies, if they serve enterprises Yeah. They care about the governance and where that data's yeah. Everything about it.

Twenty thirty, not that far away. What do you think the world looks like? Ultimately, I see a world where we're kind of split. There is a world where companies start offering sort of intent based or semantic based endpoints in their API. So, we could, instead of pulling in all the data from the ticketing system and then vectorizing it and searching it, actually, what if Linear and Asana released an endpoint that let us say, Who was upset last year? They've vectorized it already, and they just return data for LLM. They're not incentivized to do that, because then the expense goes to them. But that would be an interesting world.

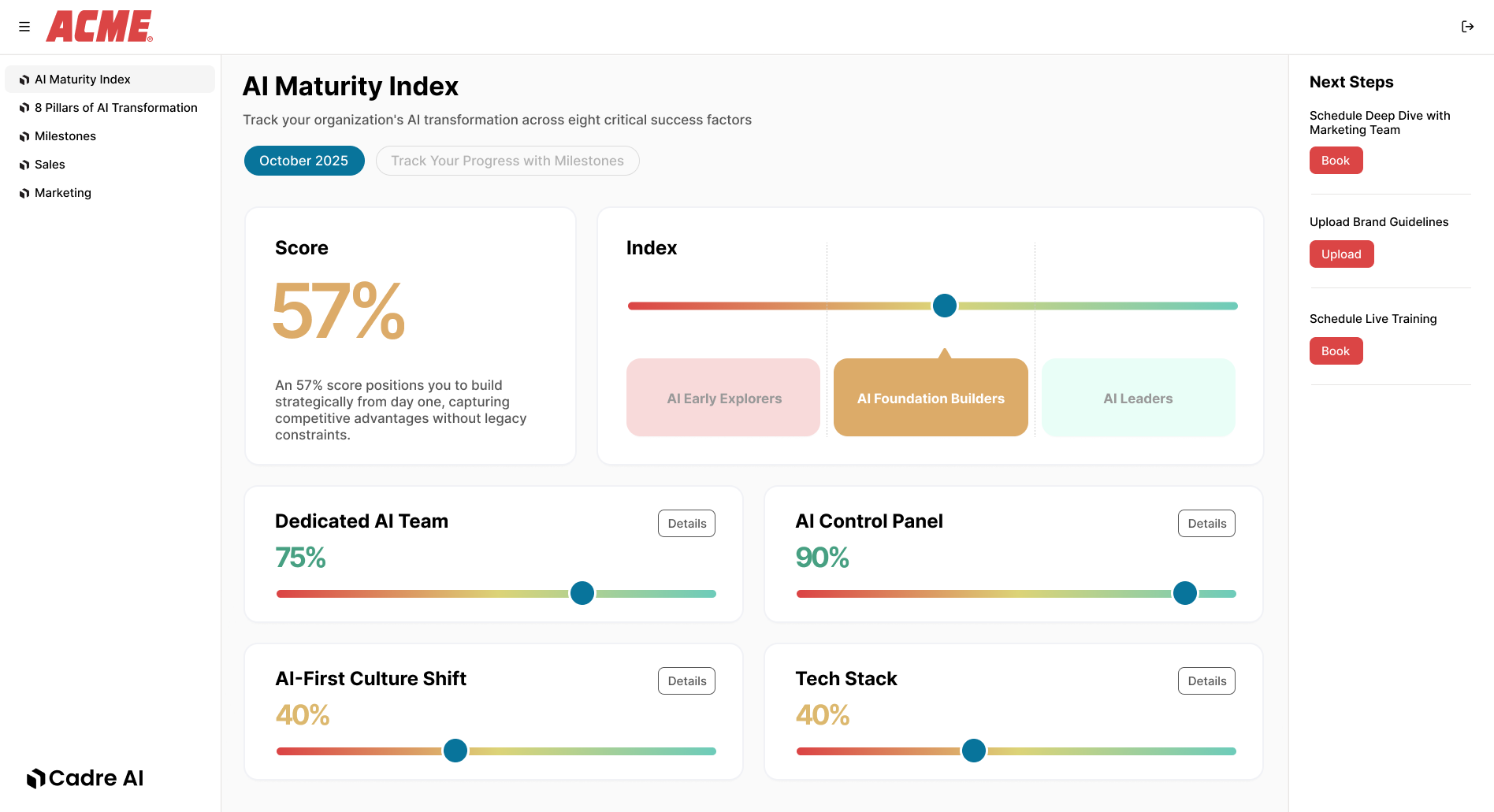

Track your AI results