Is Physical AI the Next Frontier for Enterprise? [Ft. Sud Bhatija, Spot AI]

Physical AI is the application of AI to real-world settings. The big difference between physical and digital AI is that in order to be able to operate in a physical space, you need to be able to see. Face recognition existed well before LLMs. What happened after LLMs was customers started realizing that AI and video AI can do a lot for them. And what we found is that the organizations that are the most successful at applying AI and deploying it and actually getting adoption are those that look at it in a positive way to train their people and help them be better at what they do. Speaking of the future, it's twenty thirty. Where do you think it's going? You're gonna see a lot more. Alright. We're here with Sud Bhatija, co-founder and COO of Spot AI. Welcome. Thank you for having me. I wanna talk a little today about the idea of physical AI. We've all heard about AI. We know it's on our phones. We know it's on our devices. We're using it on computers. How do you guys think about the idea of physical AI? If you look at the composition of our economy, there's a bunch of work that happens in the digital world, on screens, at a desk. But an overwhelming majority actually happens in the physical world, where you're walking around, moving things, interacting with people, interacting with objects. Physical AI is the application of AI to real-world settings where people have to move around to do their work. The big difference between physical and digital AI is that in order to operate in a physical space, we need to be able to see. So physical AI requires you to use not just text, but video and audio to be able to operate in that world and then actuate it and create outcomes. How do you actually process video content? I mean, you think about cameras having feeds. Right? There's gotta be some latency there. If you had to interpret video at the same speed that you would interpret a prompt in the digital space, how is that possible?
What makes it possible, actually, is edge computing. Video is, as you probably guessed, very voluminous. Moving a large amount of video to the cloud is expensive at best and impossible or impractical at worst. So a lot of it comes down to being able to index and analyze the video right where it's produced. And that's something that we've been working on for several years now. What's been happening with GPUs and NVIDIA hasn't just benefited compute in the cloud; they have lower-power versions that have actually benefited compute at the edge. And that's a critical part of the stack if you wanna be able to analyze and understand video and make it usable in a way that is both timely and cost-effective for the use case. So you've got AI that can read video essentially live if it's fed into a local server, I'd assume. That's right. Right? You still have cloud computing being done, I would imagine, to train? Right. Okay. So think of it as the small brain and the big brain. The small brain's at the edge. It pulls in the video from the cameras, indexes the video, and does the basic analysis. So for many low-latency applications, where you need an instant response to alert somebody, to actuate a machine, or to trigger a sensor, that's what you use. But for bigger and more complex analysis, you actually move video to the cloud. And being able to seamlessly operate between both of those is key to delivering value in this market. Over time, we actually see the edge becoming more important, with the ability to run video language models on the edge itself. Models are consistently getting better in smaller packages, and compute's getting bigger. So the edge is only going to become more important going forward with video. So there could be a million applications of this, I would imagine. Right? Absolutely. How are you guys utilizing it?
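The small brain / big brain split described above can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions, not Spot AI's implementation: the `Task` fields, the 500 ms latency cutoff, and the 1-10 complexity score are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EDGE = "edge"    # "small brain": local appliance next to the cameras
    CLOUD = "cloud"  # "big brain": larger models, higher round-trip latency


@dataclass
class Task:
    name: str
    max_latency_ms: int  # how quickly a response is needed
    complexity: int      # hypothetical 1-10 score of how deep the analysis is


# Hypothetical thresholds, chosen only for illustration.
EDGE_LATENCY_CUTOFF_MS = 500
EDGE_MAX_COMPLEXITY = 5


def route(task: Task) -> Tier:
    """Keep latency-critical or simple work at the edge; send the rest to the cloud."""
    if task.max_latency_ms <= EDGE_LATENCY_CUTOFF_MS:
        # Alerting someone, actuating a machine, triggering a sensor:
        # there is no time for a cloud round trip, so latency wins.
        return Tier.EDGE
    if task.complexity <= EDGE_MAX_COMPLEXITY:
        # Indexing and basic analysis stay local, so raw video never has to move.
        return Tier.EDGE
    # Bigger, more complex analysis goes to the cloud.
    return Tier.CLOUD


if __name__ == "__main__":
    for t in [
        Task("intrusion alert", max_latency_ms=200, complexity=4),
        Task("index new footage", max_latency_ms=60_000, complexity=2),
        Task("200-step SOP review", max_latency_ms=3_600_000, complexity=9),
    ]:
        print(f"{t.name} -> {route(t).value}")
```

With these made-up thresholds, the intrusion alert and the indexing job stay at the edge and only the SOP review goes to the cloud. A real system would presumably weigh bandwidth, model size, and storage as well.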
We see two big applications that we focus on where video can be used in the physical world. One is security, and the other is operations. On security, there are more than a million security guards in the US whose primary job is to see if something is happening and take action. Sometimes they're walking around. Sometimes they're sitting behind screens, just looking at a bunch of video feeds. We see video and video AI becoming a big part of automating that piece of work, and basically extending security to places where it was not practical before, where it's not cost-effective to have a guard, or where you don't want to put somebody in harm's way. The second category of video AI applications is on the operations side. In manufacturing alone, there are more than six hundred thousand people whose only job is to observe and manage people on a manufacturing line and make sure they're doing what they're doing correctly. That's just one industry and one type of job. We think that a lot of that can be automated with video AI to deliver better outcomes for our customers, because no one person can be everywhere at the same time. No one person has perfect attention. And no one person has all the information and all the context they need to pick up on things that aren't going the right way. That makes total sense to me, especially for warehouse workers. Maybe there's a cable on the floor that someone might trip over, and it's gonna spot that. It might tag some things. There could be a lot of things. I'm wondering about security, though, because you guys work with enterprises. Right? Typically. Very much. Okay. So you've got a massive warehouse, let's say.
They typically have multiple, you know, security guards? I would imagine, yes. They are monitoring things, but the goal is also that there's someone physically there to prevent something from happening if somebody were to try to break in. So is your value prop that you can have fewer security guards because AI is monitoring it, and then that one security guard just needs to get over to that spot faster? It's pretty much that you need fewer security guards to get the same outcomes, and here's how it works. In most cases, if there's somebody doing something they shouldn't, ninety percent of the time they stop doing what they're doing if they know that somebody is watching them and they're called out on it. So what the AI does is understand when somebody is coming in, and it can perform very complex analysis. Let's say somebody is coming into an electric vehicle charging station and is trying to steal copper. The signatures of somebody trying to steal copper are pretty complex. Our AI can actually detect and understand that, and differentiate between somebody who's doing that and an EV owner who is getting out of the car to charge their vehicle. And then it can respond to them automatically, without human intervention. Via speaker? Via speaker, via lights. And it can do it in a contextual way, so that the person actually thinks there's a human on the other side, but there isn't. Ninety percent of the time, that solves the problem. And that's how we reduce loss for a lot of large enterprises. And then the ten percent of the time, you need an escalation to the cops? In that scenario, it's not the human guard walking there. It's the police. In which case, what the AI can do is escalate it to a human in a different location, and then they call the cops. So that's how we improve security outcomes.
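The escalation ladder Sud describes (automated deterrent first, a remote human and the police only when that fails) can be sketched as a small decision function. The outcome names and the `expected_escalations` helper are hypothetical; the 0.9 default simply plugs in the "ninety percent of the time" figure from the conversation.

```python
from enum import Enum, auto


class Outcome(Enum):
    IGNORED = auto()                # e.g. an EV owner getting out to charge their car
    RESOLVED_BY_DETERRENT = auto()  # person leaves after a speaker/light warning
    ESCALATED = auto()              # remote human reviews and calls the police


def handle_event(is_suspicious: bool, left_after_warning: bool) -> Outcome:
    """Hypothetical escalation ladder; not Spot AI's actual logic."""
    if not is_suspicious:
        return Outcome.IGNORED
    # Step 1: automated, contextual warning via speaker and lights.
    if left_after_warning:
        return Outcome.RESOLVED_BY_DETERRENT
    # Step 2: hand off to a human in a different location, who calls the police.
    return Outcome.ESCALATED


def expected_escalations(suspicious_events: int, deterrent_rate: float = 0.9) -> int:
    """How many events still need a human if the deterrent works at `deterrent_rate`."""
    return round(suspicious_events * (1 - deterrent_rate))


if __name__ == "__main__":
    print(handle_event(is_suspicious=True, left_after_warning=False))
    print(expected_escalations(100))
```

At a 90% deterrent rate, 100 suspicious events would leave about 10 for a remote human, which is the staffing reduction the value prop rests on.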
So how do you prevent it? Because, again, coming back to latency: somebody is coming into the scene, and there's gotta be, I would imagine, one agent that's saying, uh-oh, okay, someone's coming in, let's trigger some kind of recognition. Does this look like sketchy behavior or not? Is there another agent that then checks that against some kind of guardrails to say, oh yeah, hoodie, whatever the things are? Yeah. We actually use a pretty complex system of multiple agents: one set that triggers suspicious events, and then another set of agents that evaluates them in depth and removes false positives. The biggest problem with most of these systems historically is all the false positives. You end up with a plethora of alerts that don't really make sense. And the problem with that is that if you want to actually trigger an action, you can't have it happen on alerts that aren't real. So there's another agent that evaluates those alerts to make sure they're actually right. And that's how we get really good precision and recall, to be effective. Otherwise, you have this loudspeaker playing and there's nobody really there, because a cat was walking around and suddenly it sounded the alarm. So we do that. And then we have other agents that take care of even more complex tasks. Let's say, on the operations side, you want to analyze whether people are following a standard operating procedure. Those are very complex things, right? You have two hundred steps. It involves a very deep understanding of what the parts of the manufacturing line are and who's doing what with which piece. So you have this third category of agents that operate in the cloud, that are able to understand all of those things and almost function as a manager would: evaluate performance against them and suggest action. It would be impossible for a human to do that. Right?
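A rough sketch of the trigger-then-verify idea: a permissive first stage fires on anything suspicious, and a stricter second stage must agree before any action is taken. The two thresholds, the `Detection` fields, and the sample scores are invented for illustration; the point is only to show how a verification stage can raise precision (fewer alarms for a passing cat) without hurting recall.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    trigger_score: float  # cheap first-pass confidence (hypothetical)
    verify_score: float   # deeper second-pass confidence (hypothetical)
    is_real: bool         # ground truth, known only when evaluating the system


def fires(d: Detection, use_verifier: bool,
          trigger_threshold: float = 0.3, verify_threshold: float = 0.8) -> bool:
    # Stage 1 is deliberately permissive so real events are not missed.
    if d.trigger_score < trigger_threshold:
        return False
    # Stage 2 must also agree before any speaker or light is triggered.
    return d.verify_score >= verify_threshold if use_verifier else True


def precision_recall(detections: list[Detection], use_verifier: bool) -> tuple[float, float]:
    tp = fp = fn = 0
    for d in detections:
        fired = fires(d, use_verifier)
        if fired and d.is_real:
            tp += 1
        elif fired:
            fp += 1  # an alarm with nobody really there
        elif d.is_real:
            fn += 1  # a real event the system missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Invented sample data for illustration only.
EVENTS = [
    Detection("person at EV charger", 0.9, 0.95, True),
    Detection("cat walking by", 0.6, 0.10, False),
    Detection("tree in the wind", 0.4, 0.20, False),
    Detection("person at fence", 0.7, 0.85, True),
]

if __name__ == "__main__":
    print("trigger only:", precision_recall(EVENTS, use_verifier=False))
    print("with verifier:", precision_recall(EVENTS, use_verifier=True))
```

On this toy data, the trigger stage alone gives precision 0.5 and recall 1.0; adding the verifier lifts precision to 1.0 while recall stays at 1.0. On real footage the verifier would also miss some true events, so the thresholds become a precision/recall trade-off.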
I mean, think about some auto manufacturing facility or whatever it is. Exactly. It would be impossible because, one, the steps are very large in number. Second, they are happening at multiple places across the facility itself. And then they're happening in multiple facilities. No one person can see all of those at the same time, even if they were looking at cameras. Beyond that, the processes are so nuanced. And what we find with larger enterprise customers is that different locations do things very differently, because the machinery is a different type, or one is slightly older than the other. There is no one person in any of these organizations who has the collective context of what the physical ontology of these businesses looks like. That's what AI does. So the way we like to put it to our customers is that AI is your best employee. It never leaves. It doesn't get tired. It knows everything about every corner of your facility, so if people come and leave, that's fine. You have AI that fully understands your business, which you can both evaluate people against and use to train new people who need to come in and do the job. You guys started in twenty twenty or twenty eighteen? So you had products. You were going to market before AI was hot, before big LLMs came out. What happened there? Was there this massive "oh, ****, what are we gonna do" moment? Are we gonna pivot? Was it a slow burn into it? Did you completely pivot the go-to-market strategy? The moment was a moment that we expected to happen. We just didn't know when. Right from the beginning, the thesis was always that video AI can help codify human visual judgment. People can't be everywhere at the same time. So there is a lot of value in building products that can see what a human sees and act like a human would. So we built our architecture for that right from the beginning.
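To make the multi-site SOP problem concrete, here is a hypothetical check that compares the steps recognized from video against a site-specific procedure. The step names, the `SITE_OVERRIDES` table, and the assumption that upstream video models emit an ordered list of step labels are all illustrative; a real two-hundred-step SOP would need far richer matching than this.

```python
# Hypothetical SOP: an ordered list of step labels per site.
BASE_SOP = ["pick part", "inspect part", "mount part", "torque bolts", "log serial"]

# A site with older machinery might need an extra step (hypothetical example).
SITE_OVERRIDES = {
    "plant_b": ["pick part", "inspect part", "preheat fixture",
                "mount part", "torque bolts", "log serial"],
}


def expected_steps(site: str) -> list[str]:
    """Return the site's own procedure, falling back to the base SOP."""
    return SITE_OVERRIDES.get(site, BASE_SOP)


def check_sop(site: str, observed: list[str]) -> dict:
    """Compare step labels recognized from video against the site's SOP."""
    expected = expected_steps(site)
    missing = [step for step in expected if step not in observed]
    # Position of each expected step that was seen (first occurrence only);
    # the steps are in order if those positions are increasing.
    positions = [observed.index(step) for step in expected if step in observed]
    in_order = positions == sorted(positions)
    return {"missing": missing, "in_order": in_order,
            "compliant": not missing and in_order}


if __name__ == "__main__":
    print(check_sop("plant_a", BASE_SOP))
    print(check_sop("plant_b", BASE_SOP))
```

The same observed sequence passes at `plant_a` but fails at `plant_b`, where `preheat fixture` is missing: the kind of per-site nuance that is hard for any one person to track across facilities.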
We became camera agnostic because the chips on cameras are really small, but an NVIDIA GPU was this big. So we knew we needed a centralized appliance that took in all the video feeds and analyzed all the video. So we built it for that. We also knew there would be this juxtaposition of edge and cloud, which would allow us to load-balance compute and storage. So we built our architecture and our fabric to be able to do that. So when the AI models inflected, we were right there with the right architecture and the right system to be able to do it. At that point, we already had more than a thousand customers. So we understood the use cases which had value. At that time? Enterprise? Enterprise, larger customers. We knew what the use cases were. We knew where there was value. So at that point, it was just about building the right applications that matched what the models could then do. Did you see any change in customers' reaction? Because before LLMs, there wasn't this kind of hype about how scary AI could be, hallucinations, and all this stuff, right? So if you were building before then, it was just: this is going to help make sure processes stay in place, security, okay, great. But now you start introducing LLMs into it, and now everybody's face in that warehouse and everything they do is being absorbed and processed by an LLM. Was there any change in their posture, in their security, in their internal policies, like the privacy policies of the organizations, or anything? I think face recognition existed well before LLMs did. So there were already many organizations who said, yeah, we're okay with it, and we want to use it in some way, and others who said, we're not okay with it, and we don't want to use it. And then we built our product to allow for that.
I think what happened after LLMs was customers started realizing that AI and video AI can do a lot for them. And so suddenly we had customers coming and telling us, we have these three business problems, can you solve them for me with AI? So it was a change in the conversation. Now, the concern that then started showing up on the ground with a lot of these customers, especially among the people who are actually doing the work, is: hey, will AI take my job? And what we found is that the organizations that are the most successful at applying AI, deploying it, and actually getting adoption are those that look at it in a positive way, to train their people and help them be better at what they do. In fact, we had some organizations that created incentive programs around the AI and the products we were offering, to incentivize people to learn from it, use it as a tool to get better, and actually pay them more based on that. So there's a lot of messaging involved, and there's a lot of change management involved. But I think on the whole, organizations just became a lot more open to what they could do with AI. As I was doing some research for this, I was thinking, man, how soon is it before this is on my Ring camera at home? Because the number of alerts I get where it's a tree blowing in the wind is frustrating, you know? And every time I log in to look at it, it's like, battery's being used and all this stuff. Obviously, I'm not gonna have a server on-prem at my house to do all this. How far do you think we are from this technology being small enough that it could be on cloud-based personal home security? I think we're a bit away from that, just because of how big the models are, and it also depends on how complex your use case is.
So we do see having a server or appliance making a very big difference, at least for the next five years, because a couple of things are happening. One is that the models are getting better, but the hardware that you can put on these servers is also getting larger. So at any point in time, especially for enterprises, the most complex application will always be on the server first before it goes on the camera. Now, what you'll increasingly have, and you're already seeing this with Ring (you saw their Super Bowl campaigns), is cameras being able to deliver better and better applications. But the camera applications will never be at the cutting edge for the foreseeable future. Okay. So my marketing brain always goes to... by the way, my background is in marketing, and I am the worst when it comes to writing. I don't watch any ads. I have no ads on anything. I'm not on any of the socials, so I don't see any of that. I reject cookies at every opportunity I get. But I always go to: how is this gonna be commercialized in other ways? And I think about street cameras outside, with people walking around. Will there be instances where it's watching people and saying, this person looks thirsty because they've got Doritos in their hands, let's serve them an ad for water? Or the next time they get on ChatGPT, there's an embedded natural-language ad in there for something. Do you think this type of video AI technology that exists in the physical world will be used for that type of purpose anytime soon? I think it can at some point, almost Minority Report style, or I, Robot style, where you walk across, it can see who you are, and it can serve you the ad right there. That will happen at some point.
I can't tell you when, because the technology is progressing so fast, particularly on the video side. But I expect that you'll start seeing that in the next three to five years. Wow. Yeah. It's crazy. Okay. Speaking of the future, it's twenty thirty. What's different? Are warehouses operated by robots? I mean, Elon is sunsetting the Model X and the Model S to build robots in those factories. Or is it gonna be robots in there with cameras on them, and your technology will be in the robots versus up in the corner? Will there be mechanical forklifts that are all AI-enabled, without people? Twenty thirty is not that far away. Where do you think it's going? I think the physical AI revolution will happen in multiple ways. Robotics is definitely a big part of that. There are a lot of jobs that humans do today that robots will do, particularly dangerous jobs and jobs that are physically intense. So you're going to see a lot more proliferation of that. I do think we're going to live in a world where, in those cases, your cameras are on the robots themselves. And video in that sense is exploding, because cameras are now going into so many different places. But there's still going to be a world where, one, you need to observe and manage those robots the same way you observe and manage humans, which is why we see a second category in physical AI: this embodiment that sits in and around you, with the cameras that can see everything. That helps orchestrate factories, and it helps orchestrate these large facilities. So you're going to see that second category as well. And then you're going to have some applications where you definitely have humans, where you still can't take the human out of the loop, and you have humans and robots working together.
So I also don't think it's going to go full bore right out of the gate; it's gonna be a spectrum. The one thing I can tell you for certain is that the number of cameras you see deployed anywhere is just gonna keep growing. It's been growing reasonably over the last several years, but that number is just gonna explode. Well, Deepa, I have a Tesla. With self-driving, I'm still paying attention. I got in a Waymo for the first time, sat in the back seat, felt fine. And I don't understand why, other than there are cameras everywhere in that thing. Exactly. And when I first saw it, I almost felt like, why aren't they hiding these things? And in another way, I'm like, well, duh, of course they're not hiding them, because I feel safer seeing them. Exactly. Yeah. So I guess another question on that: now that the number of cameras expands exponentially, does that just drive compute power way up, because now you have way more data feeding in? You're gonna have way more data feeding in. You're gonna have a lot more compute at the edge. You're going to have a lot more information that you're absorbing. And I think video, as I say, is the richest data source. A picture is worth a thousand words, and a video is thousands of images. So I think the amount of data that we get about the real world is going to grow. It is already growing, and it's gonna grow even more, automatically. So compute, storage, and applications are just gonna increase. And you brought up the point that you are now getting okay with it. I think that's increasingly going to happen, where people generally know that in most public spaces, they are on camera. And we are seeing in the enterprise as well that people are becoming increasingly okay with that. Well, not only that. I've been rejecting cookies because I don't wanna get ads served to me. And here I am.
I'm now willing to probably wear something that allows ChatGPT or Claude to listen to my whole day. That's right. Because it's going to be so helpful. That's right. An ad for something I might be interested in is not helpful to me, right? For sure. But actually having AI that understands my day, where I don't have to feed it the knowledge all the time, that can be really helpful. And so you just open up to the ability to say, okay, I'm willing to sacrifice that privacy. And I think what's gonna be really important is that there's gonna be a need for a lot more public discourse around this, because we're not gonna get to an end state that is idyllic and perfect. There are gonna be bumps along the way. And I think there's gonna be a lot of conversation about what's okay and what's not okay. Right? The same way that in the digital world you have cookies and permissions for what you do, there's gonna have to be a whole set of conversations about what that looks like in the physical world as well. So I think it's gonna be really interesting times. We're in very uncharted waters, and there's no sign of that stopping anytime soon. And we're not that far away. Not that far away. Alright. Sud, thank you so much for coming by. Appreciate it. Thank you for having me. Appreciate it.

Over a million security guards in the US spend their days watching things happen. Sud Bhatija, Co-Founder and COO at Spot AI, is building the system that makes most of that unnecessary. In this episode, he breaks down how physical AI works at enterprise scale: from the edge-cloud architecture that enables real-time video analysis, to a three-tier multi-agent system that filters out false positives so that automated responses via speakers and lights can be trusted to fire. Those responses resolve security incidents 90% of the time with no on-site human intervention required.

Sud also gets specific on why having 1,000+ customers before the LLM wave gave Spot AI a structural advantage when models inflected, and why the organizations seeing the highest AI adoption aren't the ones with the best technology. They're the ones that frame AI as a way to train their people, some going as far as paying workers more for learning to use it.

Topics discussed:

- The "small brain / big brain" edge-cloud architecture for low-latency video analysis

- Three-tier multi-agent system: detection, false positive removal, and cloud-based SOP evaluation

- Automated speaker and light response that resolves security incidents 90% of the time without on-site intervention

- Why 600,000+ manufacturing line observers represent the clearest near-term target for video AI

- How 1,000+ pre-LLM customers shaped which use cases Spot AI prioritized when models inflected

- Tying pay increases directly to AI adoption: the incentive model driving ground-level buy-in

- Why AI becomes the only entity that holds the full "physical ontology" of a multi-site enterprise

- The coming need for physical-world consent frameworks equivalent to digital cookies and permissions

Spot AI builds physical AI systems that give enterprises real-time intelligence across their facilities through video. Where most AI operates on text and screens, Spot AI processes live video feeds from existing camera infrastructure to automate security response, monitor operational compliance, and capture institutional knowledge that no single person in an organization can hold. Under the hood, Spot AI runs a distributed edge-cloud architecture: a local appliance handles low-latency decisions at the source, layered agents filter out false positives before any action is triggered, and cloud-based agents evaluate complex tasks like SOP compliance across multiple facilities simultaneously. Automated speaker and light responses resolve 90% of security incidents with no on-site human intervention required.

If you look at the composition of our economy, there's a bunch of work that happens in the digital world, on screens, at a desk. But an overwhelming majority actually happens in the physical world, where you're walking around, moving things, interacting with people, interacting with objects. Physical AI is the application of AI to real-world settings where people have to move around to do their work. The big difference between physical and digital AI is that in order to be able to operate in a physical space, you need to be able to see. So physical AI requires you to use not just text, but video and audio to be able to operate in that world and then actuate it and create outcomes.

Moving a large amount of video to the cloud is expensive at best and impossible or impractical at worst. So a lot of it comes down to being able to index and analyze the video right where it's produced. And that's something that we've been working on for several years now. What's been happening with GPUs and NVIDIA hasn't just benefited compute in the cloud. They have lower-power versions that have actually benefited compute at the edge.
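A back-of-the-envelope calculation shows why shipping raw video to the cloud gets expensive quickly. The 4 Mbps per camera figure and the 100-camera site are assumptions for illustration only; real bitrates vary widely with resolution, frame rate, and codec.

```python
SECONDS_PER_DAY = 86_400


def aggregate_uplink_mbps(cameras: int, mbps_per_camera: float) -> float:
    """Sustained upstream bandwidth to send every feed to the cloud."""
    return cameras * mbps_per_camera


def daily_transfer_tb(cameras: int, mbps_per_camera: float) -> float:
    """Decimal terabytes per day if every camera streams continuously."""
    bits_per_day = aggregate_uplink_mbps(cameras, mbps_per_camera) * 1e6 * SECONDS_PER_DAY
    return bits_per_day / 8 / 1e12  # bits -> bytes -> terabytes


if __name__ == "__main__":
    # Assumed: 100 cameras at ~4 Mbps each (varies with resolution, frame rate, codec).
    print(aggregate_uplink_mbps(100, 4.0), "Mbps")
    print(round(daily_transfer_tb(100, 4.0), 2), "TB/day")
```

At those assumed numbers, a single site would need about 400 Mbps of sustained uplink and roughly 4.32 TB of transfer per day, which is why indexing video at the edge and sending only what's needed upstream is attractive.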

Face recognition existed well before LLMs did. So there were already many organizations who said, yeah, we're okay with it, and we wanna use it in some way. Others said, we're not okay with it, and we don't wanna use it. And then we built our product to allow for that. I think what happened after LLMs was customers started realizing that AI and video AI can do a lot for them. And so suddenly, we had customers coming and telling us, we have these three business problems, can you solve them for me with AI? So it was a change in the conversation. Now the concern that then started showing up on the ground with a lot of these customers, especially among the people who are actually doing the work, is: hey, will AI take my job?

What we found is that the organizations that are the most successful at applying AI, deploying it, and actually getting adoption are those that look at it in a positive way, to train their people and help them be better at what they do. In fact, we had some organizations that created incentive programs around the AI and the products we were offering, to incentivize people to learn from it, use it as a tool to get better, and actually pay them more based on that.

How far do you think we are from this technology being small enough that it could be on cloud-based personal home security? I think we're a bit away from that, just because of how big the models are, and it also depends on how complex your use case is. So we do see having a server or appliance making a very big difference, at least for the next five years, because a couple of things are happening. One is that the models are getting better, but the hardware that you can put on these servers is also getting larger. So at any point in time, especially for enterprises, the most complex application will always be on the server first before it goes on the camera. What you'll increasingly have, and you already see this with Ring, is cameras delivering better and better applications, but the camera applications will never be at the cutting edge for the foreseeable future.

Speaking of the future, it's twenty thirty. Where do you think it's going? I think the physical AI revolution will happen in multiple ways. Robotics is definitely a big part of that. There are a lot of jobs that humans do today that robots will do, particularly dangerous jobs and jobs that are physically intense. So you're going to see a lot more proliferation of that. I do think we're going to live in a world where, in those cases, your cameras are on the robots themselves. And video in that sense is exploding, because cameras are now going into so many different places. There's still gonna be a world where, one, you need to observe and manage those robots the same way you observe and manage humans.

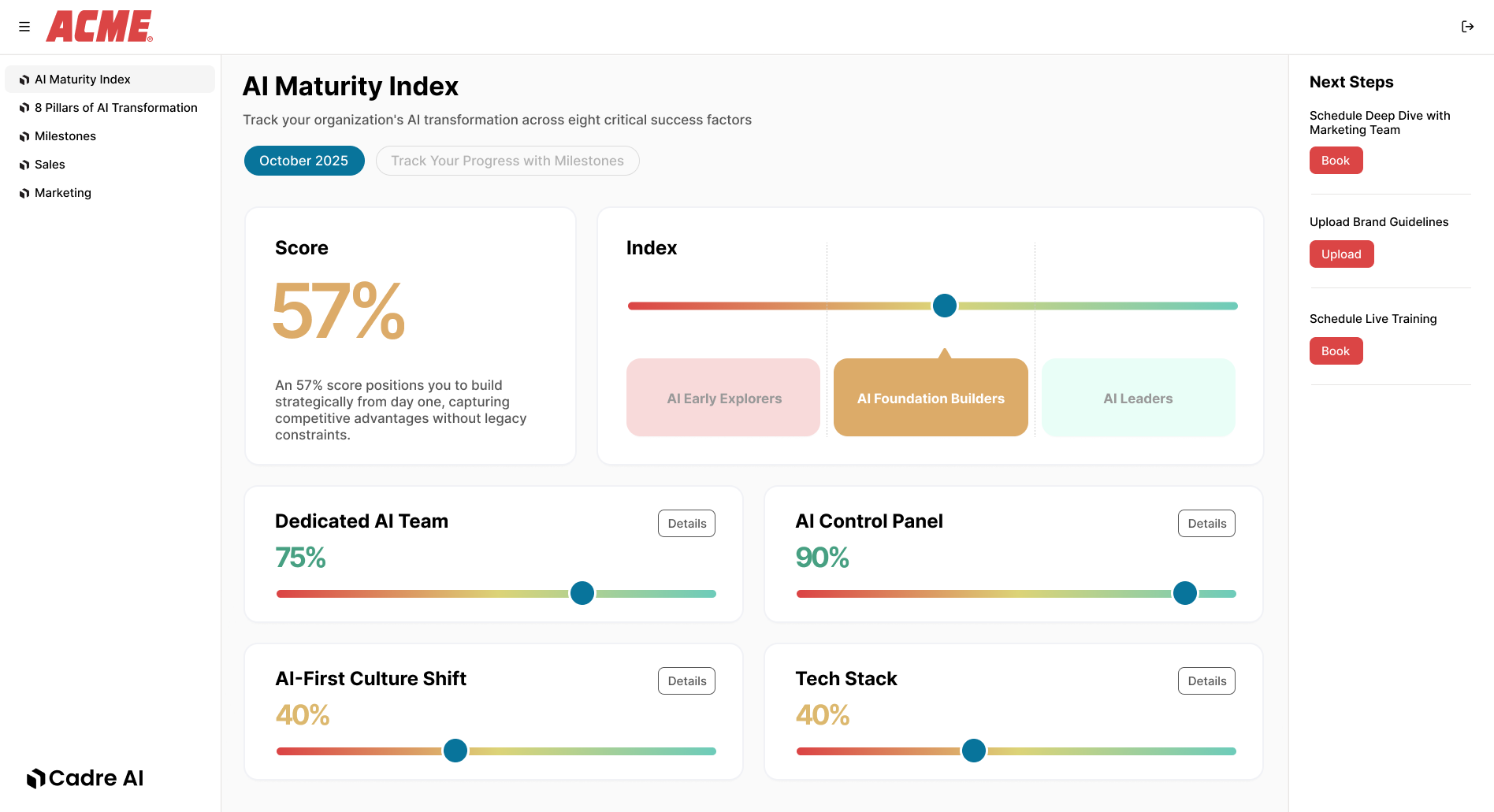
